Mastering the Jacobian Matrix in Multivariable Calculus

Learn how the Jacobian matrix enables linearization, volume scaling, and essential applications in robotics and neural network backpropagation.

The Jacobian Matrix

The Gateway to Multivariable Calculus and Linearization

What is a Jacobian?

In vector calculus, the Jacobian matrix collects all first-order partial derivatives of a vector-valued function into a single matrix. It is the multivariable counterpart of the derivative in single-variable calculus, describing how the function's outputs change in response to small changes in its inputs near a given point.

Key Structural Components

The Matrix: An m x n matrix where m is the number of output variables and n is the number of input variables.

Rows: Each row represents the gradient of one of the scalar component functions.

Columns: Each column corresponds to the partial derivative with respect to one specific input variable.

Notation: Often denoted J, Df, or, in partial-derivative form, ∂(f_1, ..., f_m)/∂(x_1, ..., x_n).
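To make the row and column layout concrete, here is a minimal sketch that approximates a Jacobian with central differences. The map `f` and the helper `numerical_jacobian` are hypothetical names chosen for illustration, and finite differences are just one simple way to estimate the entries.

```python
def f(x, y):
    """Hypothetical example map from R^2 to R^3 (n = 2 inputs, m = 3 outputs)."""
    return [x * y, x + y, x ** 2]


def numerical_jacobian(func, point, eps=1e-6):
    """Approximate the m x n Jacobian of func at point with central differences."""
    n = len(point)
    columns = []
    for j in range(n):
        forward = list(point)
        backward = list(point)
        forward[j] += eps
        backward[j] -= eps
        f_plus = func(*forward)
        f_minus = func(*backward)
        # Column j holds the partial derivatives with respect to input j.
        columns.append([(p - q) / (2 * eps) for p, q in zip(f_plus, f_minus)])
    m = len(columns[0])
    # Transpose so that row i is the gradient of output component i.
    return [[columns[j][i] for j in range(n)] for i in range(m)]


J = numerical_jacobian(f, [1.0, 2.0])
for row in J:
    print([round(v, 4) for v in row])
```

The result has 3 rows and 2 columns. Analytically, the Jacobian of this map is [[y, x], [1, 1], [2x, 0]], so at (1, 2) the entries should be close to [[2, 1], [1, 1], [2, 0]].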

Linear Approximations

The primary utility of the Jacobian is linearization. Just as a tangent line approximates a curve at a point, the Jacobian matrix provides a linear map that acts as the 'best linear approximation' of a non-linear function near a specific point.
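As a rough illustration of linearization, the sketch below compares the exact value of a map at a nearby point with the first-order estimate f(a) + J(a) h. The polar-to-Cartesian map, the expansion point, and the displacement are assumptions picked for the example.

```python
import math


def polar_to_cartesian(r, theta):
    return [r * math.cos(theta), r * math.sin(theta)]


def jacobian(r, theta):
    """Analytic 2 x 2 Jacobian of polar_to_cartesian."""
    return [
        [math.cos(theta), -r * math.sin(theta)],
        [math.sin(theta), r * math.cos(theta)],
    ]


def linearize(a, h):
    """First-order estimate f(a) + J(a) h."""
    base = polar_to_cartesian(*a)
    J = jacobian(*a)
    return [base[i] + sum(J[i][j] * h[j] for j in range(2)) for i in range(2)]


a = [2.0, math.pi / 4]   # expansion point (r, theta)
h = [0.01, -0.02]        # small displacement

exact = polar_to_cartesian(a[0] + h[0], a[1] + h[1])
approx = linearize(a, h)
max_error = max(abs(e - p) for e, p in zip(exact, approx))
print(exact, approx, max_error)
```

The error is second order in the size of h, so shrinking the displacement by half should cut the error to roughly a quarter.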

The Jacobian Determinant

When the Jacobian is square (the number of inputs equals the number of outputs), we can compute its determinant, denoted det(J). Its absolute value measures how much the function expands or shrinks volumes locally, and its sign indicates whether orientation is preserved or reversed. For a continuously differentiable function, a nonzero determinant at a point also means the function is invertible near that point.
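The volume-scaling interpretation can be checked numerically. The sketch below maps a tiny square through a hypothetical example map and compares the ratio of areas with the determinant of the analytic Jacobian. The image of the square is approximated by the quadrilateral through its mapped corners, which is reasonable only because the square is small.

```python
def f(x, y):
    """Hypothetical example map from R^2 to R^2."""
    return (x * x - y * y, 2 * x * y)


def det_jacobian(x, y):
    """Determinant of the analytic Jacobian [[2x, -2y], [2y, 2x]]."""
    return (2 * x) * (2 * x) - (-2 * y) * (2 * y)


def polygon_area(points):
    """Shoelace formula for the area of a simple polygon."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:] + points[:1]):
        total += x0 * y1 - x1 * y0
    return abs(total) / 2


x, y, side = 1.0, 1.0, 1e-3
square = [(x, y), (x + side, y), (x + side, y + side), (x, y + side)]
image = [f(px, py) for px, py in square]

area_ratio = polygon_area(image) / polygon_area(square)
print(area_ratio, det_jacobian(x, y))
```

Both printed values should be close to 8, and the agreement improves as `side` shrinks.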

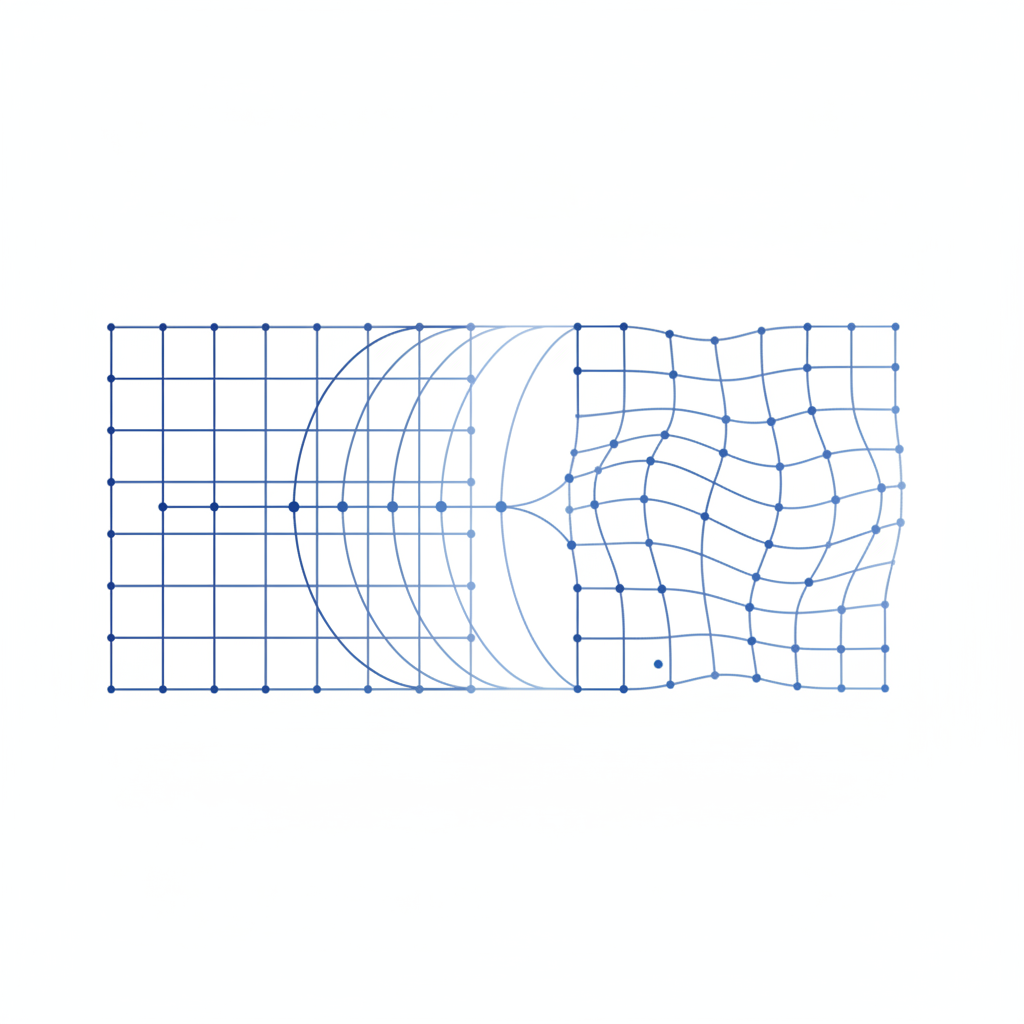

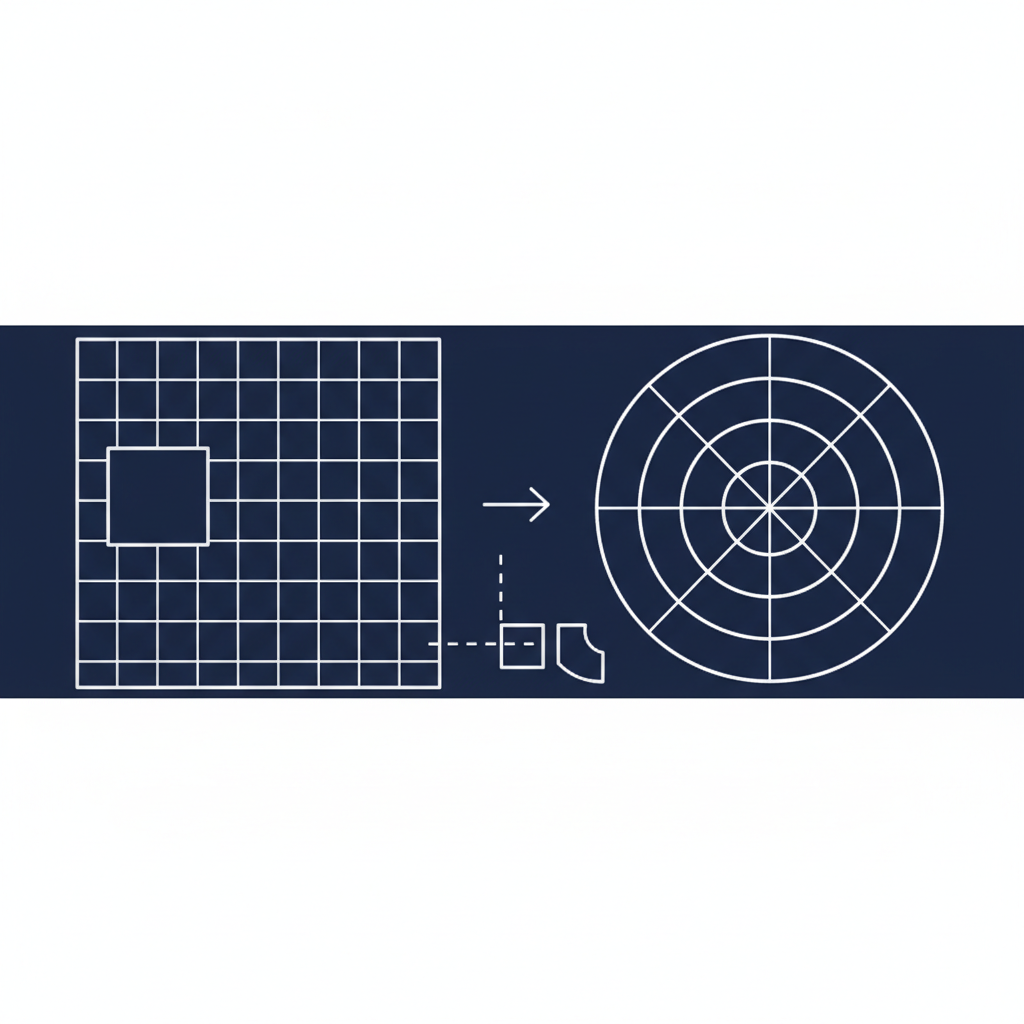

Area & Volume Scaling

When transforming coordinates (e.g., Cartesian to Polar), the area element changes size and shape. The absolute value of the Jacobian determinant is the scaling factor needed for integration, ensuring the calculation accounts for the distortion of space. For polar coordinates this factor is r, so dx dy becomes r dr dθ.
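A quick numerical check of the scaling factor: the sketch below integrates the constant 1 over a unit disk in polar coordinates with a midpoint rule, once with the polar factor |det J| = r and once without it. The function name `disk_area` and the grid sizes are illustrative assumptions.

```python
import math


def disk_area(radius, n_r=200, n_theta=200, use_jacobian=True):
    """Midpoint-rule integral of 1 over a disk in polar coordinates."""
    dr = radius / n_r
    dtheta = 2 * math.pi / n_theta
    total = 0.0
    for i in range(n_r):
        r = (i + 0.5) * dr
        # |det J| = r for the map (r, theta) -> (r cos theta, r sin theta).
        weight = r if use_jacobian else 1.0
        for _ in range(n_theta):
            total += weight * dr * dtheta
    return total


with_factor = disk_area(1.0)
without_factor = disk_area(1.0, use_jacobian=False)
print(with_factor, math.pi)   # close to pi, the true area of the disk
print(without_factor)         # close to 2*pi, the area of the (r, theta) rectangle
```

Dropping the factor silently measures the rectangle in (r, θ) space instead of the disk, which is why the determinant cannot be omitted in a change of variables.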

Application: Robotic Inverse Kinematics

Robotics & Control Context

In robotics, the Jacobian relates joint velocities to the end-effector (hand) velocity.

Equation: v = J(q) * q_dot, where v is Cartesian velocity and q_dot is joint velocity.

Singularities: At a singular configuration the Jacobian loses rank (for a square Jacobian, its determinant is zero). The end effector then loses a degree of freedom and cannot move in certain directions, no matter how the joints move.
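The velocity relation and the singularity condition can be sketched for a planar two-link arm. The link lengths, joint angles, and function names below are assumptions for illustration, not a model of any particular robot.

```python
import math


def arm_jacobian(q1, q2, l1=1.0, l2=1.0):
    """Jacobian of the end-effector position (x, y) for a planar two-link arm."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [
        [-l1 * s1 - l2 * s12, -l2 * s12],
        [l1 * c1 + l2 * c12, l2 * c12],
    ]


def end_effector_velocity(J, q_dot):
    """v = J(q) * q_dot."""
    return [sum(J[i][j] * q_dot[j] for j in range(2)) for i in range(2)]


def det2(J):
    return J[0][0] * J[1][1] - J[0][1] * J[1][0]


J_bent = arm_jacobian(0.0, math.pi / 2)   # elbow bent at 90 degrees
J_straight = arm_jacobian(0.0, 0.0)       # fully extended arm

v = end_effector_velocity(J_bent, [1.0, 0.0])
print(v)                 # approximately [-1.0, 1.0]
print(det2(J_bent))      # approximately 1.0, equal to l1 * l2 * sin(q2)
print(det2(J_straight))  # zero: singular, no velocity along the arm is reachable
```

For this arm the determinant works out to l1 * l2 * sin(q2), so the singular configurations are exactly those where the arm is fully extended or folded back on itself.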

Deep Learning: Backpropagation

In neural networks, the Jacobian describes how the output of a network layer changes with respect to its inputs. During backpropagation, the Jacobians of successive layers are multiplied together via the chain rule to compute the gradient of the loss function, which is what allows the network to learn.
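The chain-rule product can be sketched on a tiny network: a linear layer, a tanh activation, and a squared-norm loss. The weights, input, and function names are hypothetical, and for brevity the sketch computes the gradient with respect to the layer input rather than the weights. The result is checked against finite differences.

```python
import math

W = [[0.5, -0.3], [0.8, 0.2]]   # hypothetical layer weights


def forward(x):
    h = [sum(W[i][j] * x[j] for j in range(2)) for i in range(2)]  # linear layer
    a = [math.tanh(v) for v in h]                                   # activation
    loss = 0.5 * sum(v * v for v in a)                              # scalar loss
    return h, a, loss


def gradient_by_chain_rule(x):
    """dL/dx = (dL/da) (da/dh) (dh/dx), built up starting from the loss."""
    _, a, _ = forward(x)
    grad_a = list(a)                                           # dL/da, a row vector
    grad_h = [grad_a[i] * (1 - a[i] ** 2) for i in range(2)]   # times diag(1 - a^2)
    grad_x = [sum(grad_h[i] * W[i][j] for i in range(2)) for j in range(2)]  # times W
    return grad_x


def gradient_by_finite_differences(x, eps=1e-6):
    grad = []
    for j in range(2):
        plus, minus = list(x), list(x)
        plus[j] += eps
        minus[j] -= eps
        grad.append((forward(plus)[2] - forward(minus)[2]) / (2 * eps))
    return grad


x = [1.0, -2.0]
print(gradient_by_chain_rule(x))
print(gradient_by_finite_differences(x))
```

Note that the full Jacobian matrices are never formed separately and then multiplied. Each step multiplies a row vector by one layer's Jacobian, which is how backpropagation keeps the cost manageable for large layers.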

Conclusion

The Jacobian is the bridge that turns non-linear problems into linear ones locally, enabling solutions across engineering, physics, and data science.

- calculus

- linear-algebra

- robotics

- deep-learning

- mathematics

- engineering

- data-science