Machine Learning in Cyber Threat Detection | Advanced AI

Learn how to apply ML, Deep Learning, and Neural Networks to modern cybersecurity, from phishing detection to Zero Trust architectures and DDoS defense.

Machine Learning Techniques in Cyber Threat Detection

Advanced Approaches to Network Security

Introduction to Cyber Threats

Cyber threats are evolving rapidly, becoming more sophisticated and frequent. Understanding the nature of these threats is the first step in developing effective defense mechanisms using advanced technologies like Machine Learning.

Current Threat Landscape

Exponential growth in malware variants and ransomware attacks

Rise of zero-day exploits targeting unknown vulnerabilities

Sophisticated phishing and social engineering campaigns

Increasing complexity of Distributed Denial of Service (DDoS) attacks

Limitations of Traditional Defense

Traditional intrusion detection systems rely heavily on signature-based methods. These methods are effective against known threats but fail to detect novel attacks or polymorphic malware that changes its code to evade detection. This gap highlights the critical need for behavior-based approaches enabled by Machine Learning.

Core Machine Learning Categories

Supervised Learning

Models trained on labeled datasets (e.g., file samples tagged as malicious or benign).

Unsupervised Learning

Detects anomalies and patterns in unlabeled data without prior knowledge.

Semi-supervised Learning

Uses a small amount of labeled data to guide learning on larger unlabeled sets.

Reinforcement Learning

Adapts defense strategies dynamically based on attack feedback.

Supervised Learning Algorithms

Algorithms like Support Vector Machines (SVM), Decision Trees, and Random Forests are staples in threat detection. They excel at classification tasks, such as distinguishing between benign files and known malware based on features extracted from labeled training data. They tend to be accurate on threat types represented in the training data, but generalize poorly to attack families they have never seen.

Unsupervised Learning Applications

When facing zero-day attacks or insider threats, labeled data is often unavailable. Unsupervised anomaly detection techniques, such as K-Means clustering, are vital here. They establish a baseline of 'normal' network behavior and flag statistically significant deviations from it, which can surface threats that have never been seen before.
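The baseline-and-deviation idea can be sketched with a simple z-score test. This is a minimal illustration, not a production detector: the requests-per-minute figures and the threshold of 3 standard deviations are assumptions chosen for the example.

```python
from statistics import mean, stdev

def fit_baseline(values):
    """Summarize 'normal' behavior as a mean and a sample standard deviation."""
    return mean(values), stdev(values)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value whose z-score against the baseline exceeds the threshold."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical requests-per-minute counts observed during normal operation.
normal_traffic = [98, 102, 101, 97, 100, 103, 99, 100]
baseline = fit_baseline(normal_traffic)

print(is_anomalous(101, baseline))  # within the usual range
print(is_anomalous(450, baseline))  # far outside the baseline
```

A single-feature z-score only catches volume spikes; clustering or tree-based detectors apply the same principle across many features at once.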

Deep Learning and Neural Networks

Automates feature engineering, allowing the model to learn complex patterns directly from raw data.

Convolutional Neural Networks (CNNs) are used for malware classification by rendering a binary's bytes as a grayscale image and classifying the image.

Recurrent Neural Networks (RNNs) and LSTMs excel at sequential data analysis, ideal for identifying malicious behavior in network traffic logs.

Requires significant computational resources but offers state-of-the-art detection rates for complex threats.

Critical Data Preprocessing

Before training, raw cyber data must be cleaned and normalized. This involves handling missing values, encoding categorical variables (like protocol types), and scaling numerical features (like packet size) to ensure model stability and accuracy.
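The three steps named above (missing values, categorical encoding, scaling) might look like the following in plain Python. The flow records are hypothetical, and median imputation is just one reasonable choice among several.

```python
from statistics import median

def one_hot(value, categories):
    """Encode a categorical value as a 0/1 vector over a fixed category list."""
    return [1.0 if value == c else 0.0 for c in categories]

def min_max_scale(column):
    """Rescale a numeric column to the range [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0 for _ in column]
    return [(x - lo) / (hi - lo) for x in column]

# Hypothetical flow records: (protocol, packet size in bytes); None marks a missing value.
records = [("tcp", 1500), ("udp", 60), ("icmp", None), ("tcp", 780)]

# 1. Handle missing values: impute with the median of the observed sizes.
observed = [size for _, size in records if size is not None]
fill = median(observed)
sizes = [fill if size is None else size for _, size in records]

# 2. Encode the categorical protocol field.
protocols = sorted({proto for proto, _ in records})  # ['icmp', 'tcp', 'udp']

# 3. Scale the numeric feature and assemble one feature row per record.
scaled = min_max_scale(sizes)
features = [one_hot(proto, protocols) + [s] for (proto, _), s in zip(records, scaled)]

for row in features:
    print(row)
```

In a real pipeline the category list, the imputation value, and the min/max would be computed on the training set only and then reused unchanged at inference time.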

Feature Extraction Techniques

N-gram analysis of byte sequences for malware detection.

Analyzing API call sequences to understand program behavior and intent.

Extracting header information and flow statistics from network traffic data.

Dimensionality reduction (PCA) to handle high-dimensional sparse datasets efficiently.
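As an illustration of the first technique in this list, byte n-grams can be counted with a sliding window. The sample bytes below are made up for the example; a real pipeline would read them from a file and usually keep only the most informative n-grams.

```python
from collections import Counter

def byte_ngrams(data: bytes, n: int = 2) -> Counter:
    """Count every overlapping n-byte window in the input."""
    return Counter(data[i:i + n] for i in range(len(data) - n + 1))

# Hypothetical 7-byte sample containing a repeated 3-byte pattern.
sample = bytes.fromhex("558bec558bec90")
counts = byte_ngrams(sample, n=2)

for gram, count in counts.most_common():
    print(gram.hex(), count)
```

The resulting counts form a sparse, high-dimensional feature vector, which is where dimensionality reduction becomes useful.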

Phishing Detection Systems

ML models analyze email headers, content body, and URL structures to detect phishing attempts. NLP techniques (like TF-IDF or BERT) process the text to identify urgent or suspicious language often used in social engineering attacks.
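To make the TF-IDF step concrete, here is a bare-bones version of the weighting applied to three invented email snippets. It uses the plain, unsmoothed formula (term frequency times log of inverse document frequency); library implementations typically add smoothing and normalization, so their numbers will differ.

```python
import math
from collections import Counter

def tfidf(docs):
    """Return one {term: weight} dict per document using unsmoothed TF-IDF."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents does each term appear?
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)
        vectors.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in tf.items()
        })
    return vectors

# Hypothetical email bodies, for illustration only.
emails = [
    "urgent verify your account now",
    "meeting notes for your review",
    "urgent action required verify password",
]
vectors = tfidf(emails)

for term, weight in sorted(vectors[0].items(), key=lambda kv: -kv[1]):
    print(f"{term}: {weight:.3f}")
```

Terms that appear in only one message receive the highest weight, while terms shared across messages are down-weighted. A classifier is then trained on these vectors together with header and URL features.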

Malware Classification

Machine learning moves beyond signature matching to behavioral analysis. By executing files in a sandbox and monitoring system calls, models can identify malicious logic even in obfuscated or polymorphic code.

Intrusion Detection Systems (IDS)

Network-based IDS (NIDS) monitors traffic flow for anomaly detection.

Host-based IDS (HIDS) analyzes system internals like file integrity and log files.

Hybrid models combine both signatures and anomaly detection to reduce false positives.

Real-time processing using stream analytics for immediate threat mitigation.

Adversarial Machine Learning

Adversarial attacks introduce subtle perturbations to input data, designed to fool ML models into making incorrect classifications. This 'arms race' between attackers and defenders requires robust model training, often through adversarial training, where models are exposed to such perturbed examples during the learning phase to build resilience.
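One well-known way to craft such a perturbation is the fast gradient sign method (FGSM), shown here against a tiny logistic-regression detector. The technique is not named in the text above and is used only as an illustration; the weights, the input, and the step size epsilon are all hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    """Probability that x is malicious under a logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def fgsm(weights, bias, x, y_true, epsilon):
    """Move each feature by epsilon in the direction that increases the log loss."""
    p = predict(weights, bias, x)
    # For logistic regression with log loss, d(loss)/d(x_i) = (p - y) * w_i.
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]

# Hypothetical trained model and a sample that is truly malicious (label 1).
weights, bias = [2.0, -1.0], -0.5
x = [1.0, 0.5]

x_adv = fgsm(weights, bias, x, y_true=1, epsilon=0.4)

print(round(predict(weights, bias, x), 3))      # original score
print(round(predict(weights, bias, x_adv), 3))  # score after perturbation
```

With these numbers the score drops from above 0.5 to below it, so the sample would slip past a 0.5 decision threshold. Adversarial training adds examples like `x_adv`, with their correct labels, back into the training set.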

Key Challenges in Deployment

Imbalanced Datasets: Real-world data has far more benign traffic than malicious, making training difficult.

Explainability (XAI): Black-box models (such as deep neural networks) lack transparency, hindering trust in security operations.

Data Privacy: Analyzing sensitive network traffic raises GDPR and compliance concerns.

Concept Drift: The rapid evolution of malware means behavior patterns change constantly, requiring frequent retraining.

Future Directions: XAI & Federated Learning

Two major trends are shaping the future: Explainable AI (XAI) helps analysts understand why an alert was triggered, reducing alert fatigue. Federated Learning allows organizations to train collaborative models on decentralized data without sharing the actual sensitive data, preserving privacy while improving global detection rates.
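The aggregation step at the heart of Federated Learning can be sketched as a sample-weighted average of locally trained weights (commonly called federated averaging). The two organizations and their numbers below are hypothetical, and real systems add secure aggregation and many training rounds on top of this.

```python
def federated_average(client_updates):
    """Combine local model weights, weighting each client by its sample count.

    client_updates is a list of (weights, n_samples) pairs; only these
    summaries leave each organization, never the underlying records.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [
        sum(weights[i] * n for weights, n in client_updates) / total
        for i in range(dim)
    ]

# Hypothetical local models from two organizations.
updates = [
    ([0.2, 0.8], 100),  # organization A trained on 100 samples
    ([0.4, 0.6], 300),  # organization B trained on 300 samples
]
global_weights = federated_average(updates)
print(global_weights)
```

The larger dataset pulls the global model toward its local weights, yet neither party ever sees the other's traffic.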

Case Study: Predicting Financial Fraud

A major global bank implemented an LSTM-based deep learning system to analyze transaction sequences. The result was a 40% reduction in false positives compared to its legacy rule-based system, saving millions in operational costs and preventing significant fraud losses in real time.

[Figure: Banking Sector Implementation Outcomes]

Case Study: Enterprise Ransomware Defense

Scenario: A multinational corporation faced repeated ransomware waves targeting legacy servers.

Solution: Deployed an unsupervised anomaly detection model on endpoint logs.

Outcome: The system identified abnormal encryption activities in early stages, isolating infected hosts automatically.

Impact: Prevented network-wide encryption, limiting downtime to less than 1 hour.

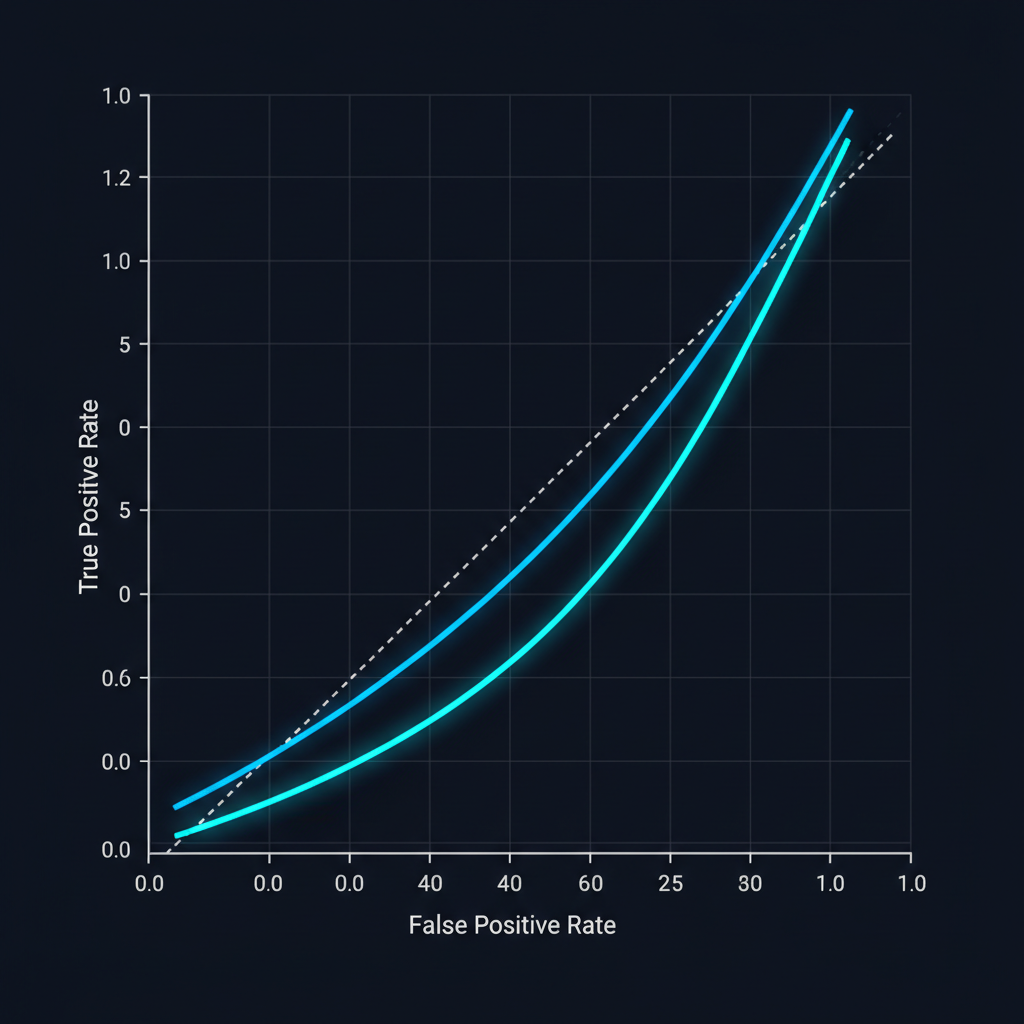

Evaluating ML Models in Cybersecurity

Why 99% accuracy can be misleading in threat detection and which metrics actually matter.

The Confusion Matrix

In cybersecurity, not all errors are equal.

True Positives (TP): Threat correctly blocked.

False Positives (FP): Legitimate traffic blocked (user frustration).

False Negatives (FN): Threat missed (breach risk).

True Negatives (TN): Normal traffic allowed.

Key Performance Metrics

Precision: Of all blocked items, how many were actually threats? (Low precision = High False Alarms).

Recall (Sensitivity): Of all actual threats, how many did we catch? (Low recall = Missed Attacks).

F1-Score: The harmonic mean of Precision and Recall. Essential when data is imbalanced.

Accuracy: Often misleading in cyber data where 99.9% of traffic is benign.
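A short worked example shows why accuracy misleads on imbalanced data. The counts are invented: 100,000 flows, of which 100 are malicious, scored by a model that never alerts and by a model that actually detects most attacks.

```python
def metrics(tp, fp, fn, tn):
    """Compute precision, recall, F1 and accuracy from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"precision": precision, "recall": recall, "f1": f1, "accuracy": accuracy}

# Hypothetical model A: never raises an alert.
silent = metrics(tp=0, fp=0, fn=100, tn=99_900)

# Hypothetical model B: catches 80 of 100 attacks at the cost of 120 false alarms.
detector = metrics(tp=80, fp=120, fn=20, tn=99_780)

print(silent)
print(detector)
```

The silent model reaches 99.9% accuracy while catching nothing. The detector has slightly lower accuracy, but its recall of 0.8 and F1 of about 0.53 reflect that it is the only useful one of the two.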

ROC Curves & AUC

The Receiver Operating Characteristic (ROC) curve plots True Positive Rate vs False Positive Rate at different thresholds.

AUC (Area Under Curve): Single number summary.

0.5 = Random guessing.

1.0 = Perfect classifier.

Helps balance the trade-off between security and usability.
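AUC has a convenient interpretation: it equals the probability that a randomly chosen malicious sample receives a higher score than a randomly chosen benign one. The sketch below computes it directly from that definition, using made-up labels and scores; it is quadratic in the number of samples, so it suits small illustrations rather than large datasets.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct ranking.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))

# Hypothetical model scores: 1 = malicious, 0 = benign.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.35, 0.7, 0.3, 0.2, 0.1]

auc = roc_auc(labels, scores)
print(round(auc, 3))
```

Here one benign sample (score 0.7) outranks one malicious sample (score 0.35), so 11 of the 12 pairs are ordered correctly.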

Tools & Frameworks

The essential software stack for building AI-driven defense systems.

The Python Ecosystem

Scikit-Learn: The industry standard for traditional algorithms (SVM, Random Forest, Clustering).

Pandas & NumPy: Essential for handling massive log files and high-dimensional network data.

Jupyter Notebooks: Critical for rapid prototyping and analyzing threat data interactively.

Matplotlib & Seaborn: Vital for visualizing attack patterns and feature distributions.

Deep Learning Powerhouses

For complex pattern recognition (e.g., malware binary analysis):

TensorFlow / Keras: Robust, production-ready framework ideal for deploying models at scale.

PyTorch: Widely used in research for rapid experimentation with dynamic neural network architectures.

Both support GPU acceleration, essential for processing real-time network traffic.

Specialized Cyber ML Tools

Elastic Stack (ELK) + ML: Built-in anomaly detection for log data.

Splunk MLTK: Machine Learning Toolkit for enterprise security operations (SIEM).

Cuckoo Sandbox: Automated malware analysis tool that generates behavioural logs for ML training.

Weka: Older but useful suite of ML software for data mining tasks.

Data Sources for Cyber ML

Machine learning models are only as good as the data they are trained on. In cybersecurity, this data comes from diverse locations across the network and endpoints, requiring normalization and aggregation to build a comprehensive threat picture.

Network Traffic Analysis (NTA)

Packet captures (PCAP) and NetFlow data provide the raw record of network communication. Features like packet size, inter-arrival time, and protocol headers are critical for detecting command-and-control communication and data exfiltration attempts.

Host-Based Data Sources

System Logs (Syslog, Windows Event Logs): Detecting authentication failures and privilege escalation.

File System Changes: Integrity monitoring for ransomware encryption activity.

API Calls: Analyzing the sequence of system calls made by running processes to identify malware.

Memory Dumps: Analyzing volatile memory (RAM) to detect fileless malware not present on disk.

The ML Lifecycle in Security

Deploying ML in security is a cycle, not a one-time event. It involves continuous data collection, model retraining, and validation against new threat intelligence to prevent 'model drift' as attackers change tactics.

Deployment & Monitoring Strategies

Inline vs. Passive: Choosing between blocking threats in real time (IPS) and alerting analysts (IDS).

Latency Constraints: Inline models must score traffic within milliseconds to avoid slowing down the network.

Explainability (XAI): Analysts need to know why a model flagged an alert to investigate effectively.

Feedback Loop: Analyst verdicts (True/False Positive) are fed back to retrain the model.

Deep Dive: SQL Injection (SQLi)

SQLi attacks inject attacker-controlled SQL into database queries through unsanitized input. ML models (often using NLP or RNNs) analyze query structure to distinguish between legitimate user input and malicious payloads such as "' OR 1=1", learning the grammar of valid SQL queries.
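Before a sequence model can learn query grammar, the input has to be turned into tokens. The following is a deliberately small tokenizer sketch; the keyword list and the class names (`KW:`, `NUM`, `IDENT`) are assumptions made for the example, not a standard scheme.

```python
import re

# Small, illustrative keyword list; a real tokenizer would cover the full SQL grammar.
KEYWORDS = {"select", "from", "where", "or", "and", "union", "drop", "insert"}

def token_classes(text):
    """Map raw input to a sequence of coarse token classes."""
    classes = []
    for tok in re.findall(r"[A-Za-z_]+|\d+|\S", text):
        if tok.lower() in KEYWORDS:
            classes.append("KW:" + tok.upper())
        elif tok.isdigit():
            classes.append("NUM")
        elif tok[0].isalpha() or tok[0] == "_":
            classes.append("IDENT")
        else:
            classes.append(tok)  # keep punctuation such as quotes and '=' as-is
    return classes

print(token_classes("alice"))
print(token_classes("' OR 1=1"))
```

A plain username collapses to a single identifier, while the payload produces a quote, a keyword, and a comparison. That structural difference is what a downstream classifier or RNN would pick up on.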

Deep Dive: DDoS Detection

Distributed Denial of Service (DDoS) attacks flood networks to exhaust resources. Anomaly detection algorithms track traffic volume and the entropy (randomness) of traffic attributes such as source IP addresses. Sudden spikes in requests from widely distributed IPs trigger alerts or automatic rate limiting.
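The entropy signal can be illustrated with Shannon entropy over the source addresses seen in a time window. Both windows below are fabricated, and applying entropy to source IPs specifically is an assumption of this sketch; the same function works for ports or other fields.

```python
import math
from collections import Counter

def shannon_entropy(items):
    """Shannon entropy, in bits, of the empirical distribution of items."""
    counts = Counter(items)
    total = len(items)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

# Hypothetical window of normal traffic: a few clients, seen repeatedly.
normal_window = ["10.0.0.1"] * 6 + ["10.0.0.2"] * 2

# Hypothetical window during an attack: every request from a different address.
attack_window = [f"203.0.113.{i}" for i in range(8)]

print(round(shannon_entropy(normal_window), 3))
print(round(shannon_entropy(attack_window), 3))
```

Entropy jumps when requests arrive from many distinct sources. Depending on the attack, it can also fall sharply (for example when a single source dominates), so detectors usually alert on deviation from the baseline in either direction.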

Advanced ML Models in Depth

Moving beyond the basics to understand the mechanics of detection algorithms.

Decision Trees & Random Forests

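Each node in a decision tree tests a single feature (e.g., 'Is file size > 1MB?') and routes the sample down one branch. Random Forests train hundreds of such trees on random subsets of the data and features, then combine their votes to reduce overfitting, making them robust classifiers for malware families.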
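How a tree chooses its threshold can be sketched with Gini impurity, one common split criterion. The file sizes and labels are invented, and the sketch handles a single numeric feature with binary labels only.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels (0.0 means perfectly pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    p = sum(labels) / n
    return 1.0 - p * p - (1.0 - p) * (1.0 - p)

def best_threshold(values, labels):
    """Find the 'value <= t' split with the lowest weighted Gini impurity."""
    best_t, best_score = None, float("inf")
    for t in sorted(set(values))[:-1]:  # the largest value would leave one side empty
        left = [y for v, y in zip(values, labels) if v <= t]
        right = [y for v, y in zip(values, labels) if v > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_t, best_score = t, score
    return best_t, best_score

# Hypothetical file sizes in KB with labels (1 = malware, 0 = benign).
sizes = [120, 300, 450, 1500, 2200, 3100]
labels = [0, 0, 0, 1, 1, 1]

print(best_threshold(sizes, labels))
```

On this toy data the split at 450 KB separates the classes perfectly. A Random Forest repeats this search on many resampled datasets and feature subsets.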

Support Vector Machines (SVM)

Ideal for binary classification tasks (e.g., Malicious vs. Benign).

Finds the optimal 'hyperplane' to separate data classes with maximum margin.

Effective in high-dimensional spaces where data is complex.

Kernel functions allow it to model non-linear relationships.

Deep Neural Networks (DNN)

Simulated neurons are arranged in layers to learn complex patterns. Input layers accept raw data (pixels, bytes), which passes through multiple hidden layers that extract increasingly abstract features automatically, an architecture loosely inspired by the human brain.

Gradient Boosting (XGBoost)

An ensemble technique that builds models sequentially. Each new model focuses on correcting the errors of the previous ones. XGBoost is an optimized implementation that is typically fast and accurate on structured (tabular) data, such as features derived from system logs.
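The sequential error-correcting idea can be shown with a stripped-down boosting loop for squared error, where each round fits a one-split "stump" to the current residuals. This is plain gradient boosting under simplifying assumptions (one feature, toy data, fixed learning rate), not XGBoost itself, which adds regularization and other optimizations.

```python
def fit_stump(xs, residuals):
    """Fit a one-split regressor that predicts the mean residual on each side."""
    best = None
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((r - (lm if x <= t else rm)) ** 2 for x, r in zip(xs, residuals))
        if best is None or err < best[0]:
            best = (err, t, lm, rm)
    _, t, lm, rm = best
    return lambda x: lm if x <= t else rm

def boost(xs, ys, rounds=20, lr=0.5):
    """Add stumps one at a time, each trained on the errors left by the others."""
    base = sum(ys) / len(ys)
    stumps, preds = [], [base] * len(xs)
    for _ in range(rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + lr * stump(x) for p, x in zip(preds, xs)]
    return lambda x: base + lr * sum(s(x) for s in stumps)

# Hypothetical feature (e.g., failed logins per hour) and label (1 = incident).
xs = [1, 2, 3, 4, 5, 6]
ys = [0, 0, 0, 1, 1, 1]

model = boost(xs, ys)
print(round(model(2), 3), round(model(5), 3))
```

Each round halves the remaining error on this data, so after 20 rounds the predictions sit very close to the true labels.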

Zero Trust Architecture & AI

'Never Trust, Always Verify' - The new security paradigm.

Core Tenets of Zero Trust

Assume the network is always hostile; no zone is 'safe'.

External and internal threats exist at all times.

Verify every request as if it originates from an open network.

AI is essential to handle the massive volume of continuous verifications.

User Behavior Analytics (UBA)

UBA establishes a baseline of normal user activity (login times, file access patterns). ML algorithms continuously monitor for anomalies or lateral movement that indicate a compromised credential.

Security Axiom: "In a Zero Trust world, identity is the new perimeter."

AI in Cloud Security

Securing dynamic and ephemeral cloud environments.

The Shared Responsibility Model

The Cloud Provider protects the 'Cloud' (Hardware, Global Infrastructure).

The Customer protects what is 'in the Cloud' (Data, Access, Configurations).

AI bridges the gap by monitoring complex customer-side configurations.

Automated compliance checks are run at scale.

Detecting Cloud Misconfigurations

Simple errors (e.g., open S3 storage buckets) cause massive breaches. ML models scan Infrastructure-as-Code (IaC) and live environments to predict and flag high-risk settings before they are exploited.

Serverless & Container Security

Ephemeral workloads like AWS Lambda functions or Kubernetes pods may live for only seconds or minutes. Traditional agent-based antivirus is a poor fit here. ML analyzes system calls and runtime behavior to detect attacks in real time, even in short-lived instances.

SOAR & Automated Response

SOAR: Security Orchestration, Automation, and Response.

ML triggers playbooks automatically (e.g., 'If confidence > 95%, block IP').

Drastically reduces Mean Time to Respond (MTTR).

Allows human analysts to focus on complex, novel threats.
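The confidence-gated playbook from the list above could be expressed as a small decision function. The 0.95 blocking threshold comes from the example in the text; the review threshold and the action names are hypothetical and would be replaced by whatever the SOAR platform actually exposes.

```python
def choose_action(confidence, block_threshold=0.95, review_threshold=0.60):
    """Pick a response tier from the model's confidence that an alert is malicious."""
    if confidence > block_threshold:
        return "block_ip"      # high confidence: respond automatically
    if confidence >= review_threshold:
        return "open_ticket"   # uncertain: route to a human analyst
    return "log_only"          # low confidence: record for later analysis

# Hypothetical alerts as (source IP, model confidence).
alerts = [("198.51.100.7", 0.99), ("198.51.100.8", 0.72), ("198.51.100.9", 0.10)]

for ip, confidence in alerts:
    print(ip, choose_action(confidence))
```

Keeping a middle tier for analyst review limits the damage a false positive can do, while still automating the clear-cut cases that drive MTTR down.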

Conclusion & The Future

The road ahead for AI in Cybersecurity.

Key Takeaways

Cyber threats are automated; our defense must be too.

Data quality is the most critical factor for model success.

Adversarial AI will be the next major battleground.

Security is not a product, but a continuous process of adaptation.

Thank You

AI is not a silver bullet, but it is an indispensable ally in the modern cyber war. Questions?

- cybersecurity

- machine-learning

- ai

- threat-detection

- network-security

- data-science

- deep-learning

- zero-trust