Designing Responsible AI Literacy for K-12 and Higher Ed

Explore strategies for scalable AI literacy in education. Learn about responsible AI use, mitigating bias, and frameworks for human-in-the-loop learning.

Designing Scalable and Responsible AI Literacy

Empowering Educators and Students in the Age of Generative AI

K-12 & Higher Education Strategy | 2026

The New Reality: Rapid Adoption in Schools

Adoption rates of Generative AI tools among educators versus students (2023-2025 Trends)

Students are adopting AI faster than institutional policy can adapt, creating an urgent need for structured literacy frameworks.

Defining Responsible AI Literacy

Bias & Fairness: Identifying algorithmic prejudice and ensuring equitable representation in outputs.

Privacy & Data: Understanding whether inputs may be used to train models, keeping inputs secure, and protecting student data.

Safety & Integrity: Mitigating hallucinations and misinformation, and safeguarding academic integrity.

Transparency: Recognizing when AI is being used and how decisions are made by 'black box' systems.

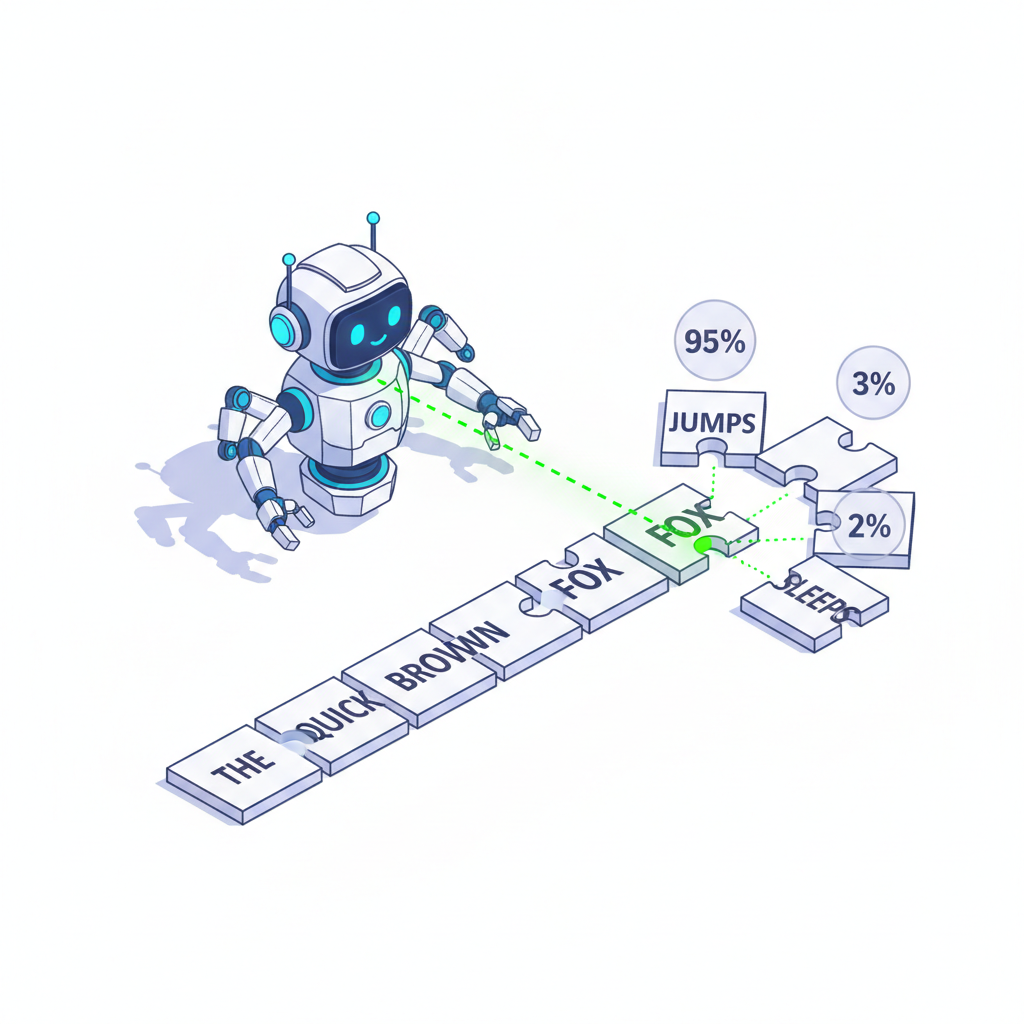

Demystifying GenAI: Prediction, Not Understanding

Generative AI models have been described as 'stochastic parrots'. They do not understand truth or logic; instead, they calculate the probability of the next token (a word or part of a word) based on patterns in their training data.

Input: 'The capital of France is...'

Process: The model draws on patterns learned from vast amounts of training text to find the highest-probability completion.

Output: 'Paris' (not because it knows geography, but because that completion best matches the pattern).
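To make "prediction, not understanding" concrete, here is a toy Python sketch, assuming a tiny hypothetical corpus and a simple word-counting model. Real generative models use neural networks over subword tokens and vastly more data, so this only illustrates the idea of picking the most probable next word; it is not how any actual product works. The names `CORPUS`, `build_model`, `next_word_probabilities`, and `predict` are illustrative only.

```python
from collections import Counter, defaultdict

# A tiny, made-up "training corpus" (hypothetical; real models see vastly more text).
CORPUS = [
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is beautiful",
    "the capital of italy is rome",
]


def build_model(sentences, context_size=2):
    """Count which word follows each run of `context_size` words."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(len(words) - context_size):
            context = tuple(words[i:i + context_size])
            counts[context][words[i + context_size]] += 1
    return counts


def next_word_probabilities(model, prompt, context_size=2):
    """Turn the follow-up counts for the prompt's last words into probabilities."""
    context = tuple(prompt.lower().split()[-context_size:])
    followers = model.get(context, Counter())
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()} if total else {}


def predict(model, prompt, context_size=2):
    """Greedy choice: return the single most probable next word, or None if unseen."""
    probs = next_word_probabilities(model, prompt, context_size)
    return max(probs, key=probs.get) if probs else None


if __name__ == "__main__":
    model = build_model(CORPUS)
    prompt = "The capital of France is"
    print(next_word_probabilities(model, prompt))
    # {'paris': 0.6666666666666666, 'beautiful': 0.3333333333333333}
    print(predict(model, prompt))  # paris
```

Because the model only counts patterns, adding a few more sentences that pair "France" with the wrong city would make it output that city with the same confidence. This is a simplified picture of how a fluent but false "hallucination" can arise.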

Risks: Educator Concerns vs. Student Reality

While educators often fear plagiarism most, the more insidious risks are silent hallucinations and the erosion of critical thinking skills when AI replaces thinking rather than scaffolding it.

Evaluating Use: Supporting vs. Undermining Learning

✅ Supports Learning (Scaffold)

• Brainstorming initial ideas and overcoming the 'blank page'.
• Generating counter-arguments to strengthen debates.
• Simplifying complex texts for accessibility.
• Creating practice quizzes for self-assessment.

⚠️ Undermines Learning (Crutch)

• Generating the final essay or reflection.
• Summarizing texts without reading them first.
• Solving math problems without showing the process.
• Accepting AI 'facts' without verification.

Equity and Bias: The Hidden Curriculum

AI models are trained on internet data that reflects historical biases. Without literacy, students may internalize these biases as objective truth.

Critical Considerations:

Representation Bias: Whose voices are missing in the training data?

Access Gap: The gap between premium (more capable) models and free (limited) models can create a two-tier education system.

Framework for Decision Making

Before assigning or permitting AI, ask:

Where is the thinking?

Does AI replace the cognitive struggle or enable higher-order thinking?

Is the data safe?

Does the task require inputting sensitive learner information?

Can we verify it?

Do students have the domain knowledge to spot hallucinations?

Operational Guardrails: The 'Human-in-the-Loop'

Attribution: If AI is used, that use must be cited, including the prompt and the tool and model version.

Anonymization: Use generic identifiers ('Student A') in prompts, never real names.

Verification: The human user is 100% responsible for the accuracy of the output.
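Part of the anonymization guardrail can be automated before text is pasted into an AI tool. The following is a minimal Python sketch, assuming the teacher already has a list of student names; `anonymize` and `make_label` are hypothetical helpers, not part of any particular product. It only replaces the listed names, so other identifying details (student IDs, pronouns, unusual circumstances) still need a human check.

```python
import re
import string


def make_label(index):
    """Map 0 -> 'Student A', 25 -> 'Student Z', 26 -> 'Student AA', ..."""
    letters = ""
    index += 1
    while index > 0:
        index, remainder = divmod(index - 1, 26)
        letters = string.ascii_uppercase[remainder] + letters
    return f"Student {letters}"


def anonymize(text, student_names):
    """Replace each listed name with a generic label in a single pass.

    Returns (anonymized_text, key). Keep `key` on your own device so you can
    re-identify students later; never paste it into an AI tool.
    """
    labels = {}  # lower-cased name -> generic label
    key = {}     # generic label -> original name
    for name in student_names:
        if name.lower() not in labels:
            label = make_label(len(labels))
            labels[name.lower()] = label
            key[label] = name
    if not labels:
        return text, key

    # Try longer names first so "Maria Lopez" is matched before "Maria";
    # \b word boundaries stop "Ann" from matching inside "Anna".
    alternatives = sorted(labels, key=len, reverse=True)
    pattern = re.compile(
        r"\b(?:" + "|".join(map(re.escape, alternatives)) + r")\b",
        re.IGNORECASE,
    )
    anonymized = pattern.sub(lambda m: labels[m.group(0).lower()], text)
    return anonymized, key


if __name__ == "__main__":
    note = "Maria struggled with fractions; Ben helped Maria check her work."
    safe_note, key = anonymize(note, ["Maria", "Ben"])
    print(safe_note)
    # Student A struggled with fractions; Student B helped Student A check her work.
    print(key)  # {'Student A': 'Maria', 'Student B': 'Ben'}
```

Replacing all names in one regex pass, rather than one name at a time, keeps a later substitution from accidentally rewriting a label that was already inserted. Note that the example output still contains "her", a reminder that removing names alone does not make text fully anonymous.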

Moving from Fear to Agency

AI Literacy is not about learning to code; it's about learning to remain human in a digital world. Our goal is to use AI to amplify human potential, not automate the learning process.

Next Step: Audit one assignment next week using the 'Cognitive Struggle' framework: start by asking "Where is the thinking?"

- ai-literacy

- education-technology

- generative-ai

- k-12-education

- higher-education

- ethics-in-ai

- digital-citizenship