Graph Neural Networks for Traffic Forecasting | STGNN Guide

Learn how Spatial-Temporal Graph Neural Networks (STGNNs) solve complex traffic forecasting problems using deep learning and message passing architectures.

Graph Neural Networks: From Theory to Traffic Forecasting

A Deep Learning Approach to Spatial-Temporal Data Analysis

Based on research by Wu, Pan, Chen, Long, Zhang, Yu et al.

The Core Problem: Representing Graph Complexity

<b>Loss of Topology:</b> Traditional machine learning pipelines flatten graphs into fixed-length vectors during preprocessing, which discards the structural connections between nodes.

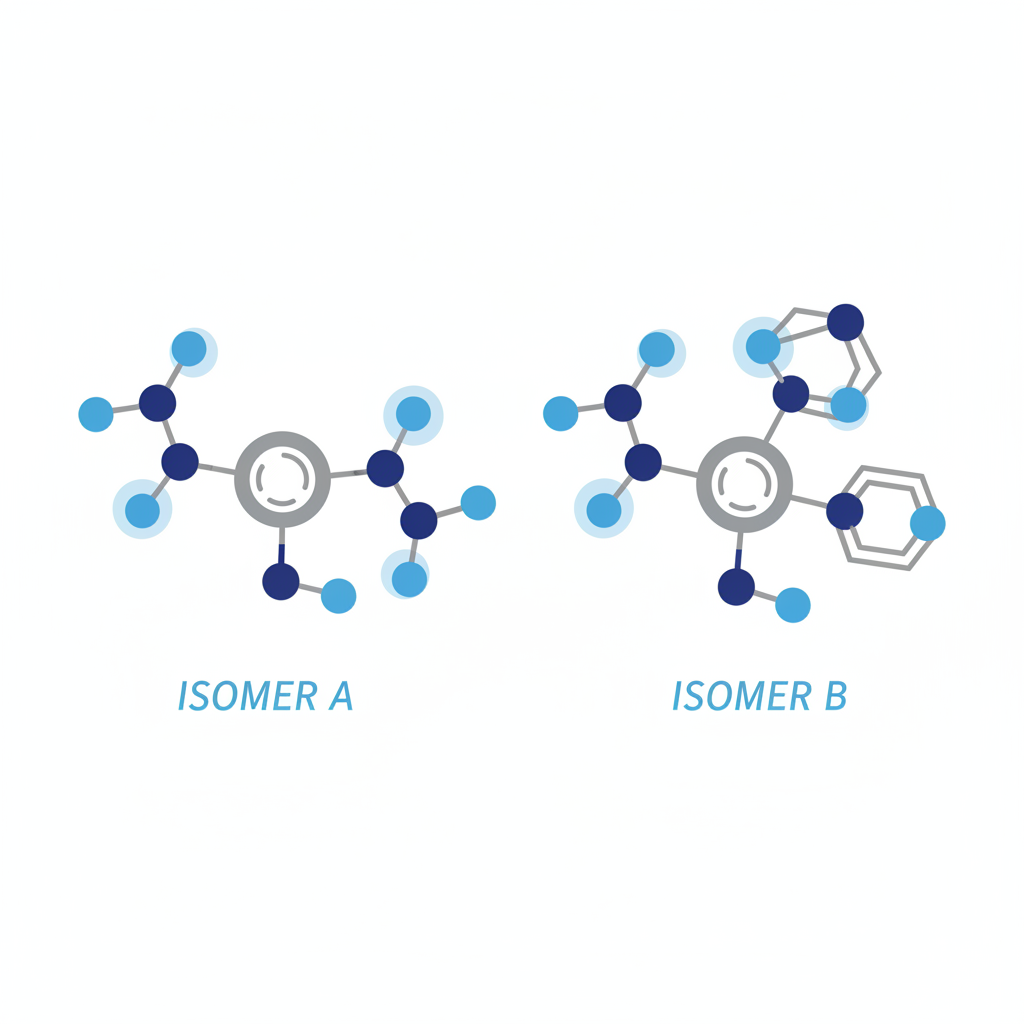

<b>The Isomerism Challenge:</b> Structures built from the same components but connected differently (like chemical isomers) look identical to a standard ML model that only sees the components.
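A minimal sketch of this effect, assuming a toy encoding where a molecule is a list of atom labels plus a bond list. The helper names `flat_features` and `degree_sequence` are illustrative and not from the source. The carbon skeletons of butane and isobutane contain the same atoms, so a flattened atom-count vector cannot separate them, while even a very simple structural signature can.

```python
from collections import Counter

# Carbon skeletons of two C4H10 isomers (hydrogens omitted for brevity).
# Each graph is (node labels, undirected edges between node indices).
butane = (["C", "C", "C", "C"], [(0, 1), (1, 2), (2, 3)])      # chain
isobutane = (["C", "C", "C", "C"], [(0, 1), (0, 2), (0, 3)])   # branched

def flat_features(graph):
    """Bag-of-atoms vector: counts labels and ignores every bond."""
    labels, _ = graph
    return sorted(Counter(labels).items())

def degree_sequence(graph):
    """Sorted node degrees: a simple signature that depends on topology."""
    labels, edges = graph
    degree = [0] * len(labels)
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    return sorted(degree)

same_flat = flat_features(butane) == flat_features(isobutane)
same_structure = degree_sequence(butane) == degree_sequence(isobutane)
print(same_flat, same_structure)  # True False
```

The degree sequence is only a stand-in for "something that looks at edges"; a GNN learns a much richer structural representation.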

<b>Recursive Limitations:</b> Early attempts (RNNs/Markov chains) struggled with cyclic graphs and incurred high computational cost.

Taxonomy: The Evolution of GNNs

Recurrent GNNs (RecGNNs)

The pioneers. They learn node states by exchanging information with neighbors recursively until the states reach a stable equilibrium.

Convolutional GNNs (ConvGNNs)

They generalize convolution from images (regular grids) to graphs by aggregating each node's neighbor features. The modern standard.
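One common way to realize "aggregate neighbor features" is sketched below. The source does not give a formula, so this assumes the widely used recipe of adding self-loops and symmetrically normalizing the adjacency matrix before a linear projection and ReLU. The function name `graph_conv` and all array sizes are illustrative.

```python
import numpy as np

def graph_conv(adj, features, weights):
    """One graph convolution: normalized neighbor aggregation, then projection.

    adj:      (N, N) symmetric 0/1 adjacency matrix
    features: (N, C_in) node features
    weights:  (C_in, C_out) learnable projection (random here)
    """
    a_hat = adj + np.eye(adj.shape[0])        # self-loops keep a node's own features
    deg = a_hat.sum(axis=1)                   # degree including the self-loop
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    a_norm = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    return np.maximum(a_norm @ features @ weights, 0.0)   # ReLU

# Toy path graph 0 - 1 - 2 with random features and weights.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
rng = np.random.default_rng(0)
features = rng.normal(size=(3, 2))
weights = rng.normal(size=(2, 4))

hidden = graph_conv(adj, features, weights)
print(hidden.shape)  # (3, 4)
```

Because the same weights are shared by every node, relabeling the nodes simply reorders the output rows, which is the graph analogue of a convolution kernel sliding over an image.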

Spatial-Temporal GNNs (STGNNs)

They capture both spatial dependencies (topology) and temporal dynamics (time). Critical for traffic forecasting.

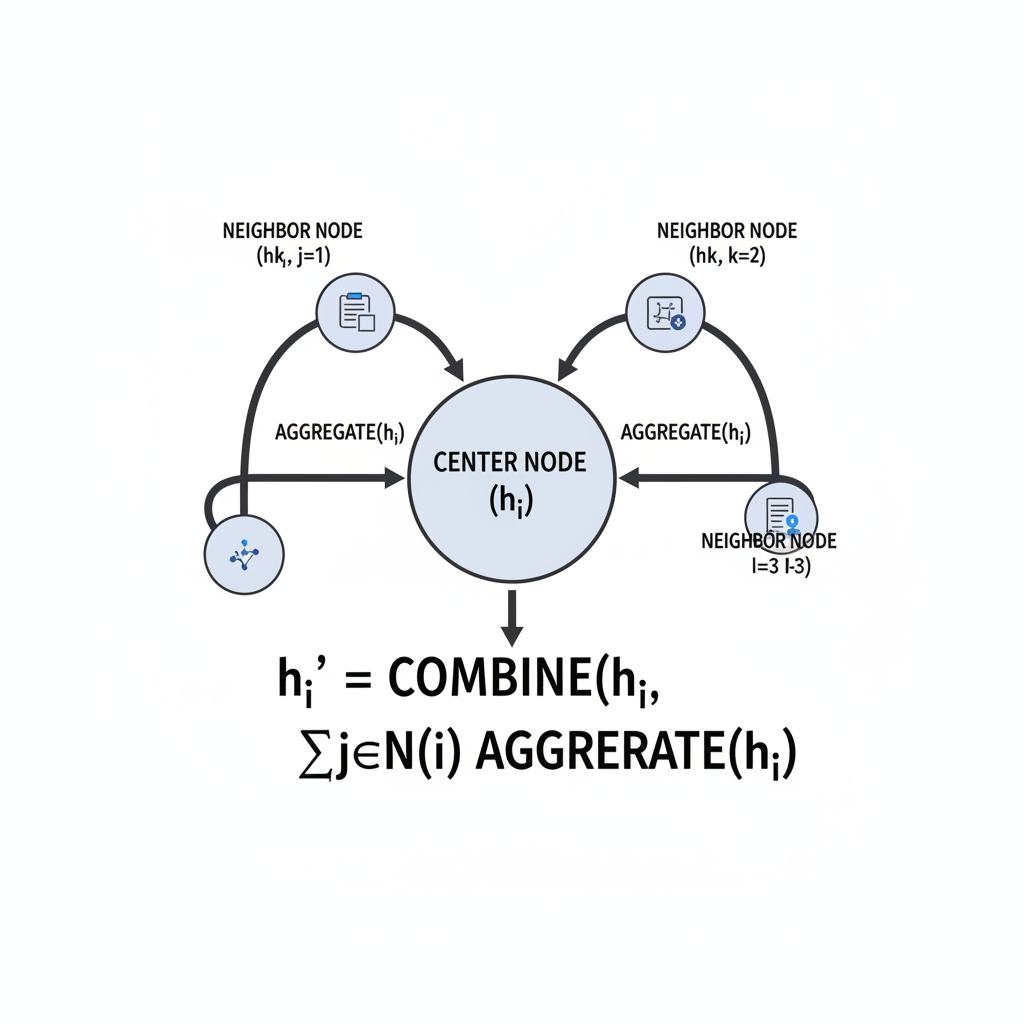

How GNNs Work: Message Passing

Central to GNNs is the state update function. A node updates its state based on: <br>1. Its own features.<br>2. Properties of connected edges.<br>3. The states of its neighbors.<br><br>The local transition function <i>f<sub>w</sub></i> aggregates this data, allowing information to diffuse through the network structure.
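The update rule above can be sketched as a fixed-point iteration. This is a simplified stand-in for <i>f<sub>w</sub></i>, not the formulation from the cited research: it assumes a single `tanh` layer, scalar edge weights as the only edge property, and a state matrix `w_state` rescaled so the update is a contraction (which is what makes the iteration settle). All names and sizes are illustrative.

```python
import numpy as np

def iterate_to_equilibrium(edge_w, node_feats, w_feat, w_state,
                           tol=1e-8, max_iters=100):
    """Repeat the local state update until node states stop changing.

    edge_w:     (N, N) edge weights, 0 where there is no edge
    node_feats: (N, F) each node's own features
    w_feat:     (F, D) maps a node's features into state space
    w_state:    (D, D) maps a neighbor's state into a message
    """
    own = node_feats @ w_feat                     # 1. the node's own features
    state = np.zeros((node_feats.shape[0], w_state.shape[0]))
    for step in range(1, max_iters + 1):
        # 2. edge weights scale 3. the neighbor states, summed per node
        messages = edge_w @ state @ w_state.T
        new_state = np.tanh(own + messages)
        if np.max(np.abs(new_state - state)) < tol:
            return new_state, step
        state = new_state
    return state, max_iters

rng = np.random.default_rng(0)
# 4-node ring: every node has exactly two neighbors.
edge_w = np.array([[0, 1, 0, 1],
                   [1, 0, 1, 0],
                   [0, 1, 0, 1],
                   [1, 0, 1, 0]], dtype=float)
node_feats = rng.normal(size=(4, 3))
w_feat = rng.normal(size=(3, 5))
w_state = rng.normal(size=(5, 5))
# Spectral norm 0.2 times at most 2 neighbors keeps the update contractive.
w_state *= 0.2 / np.linalg.norm(w_state, 2)

states, steps = iterate_to_equilibrium(edge_w, node_feats, w_feat, w_state)
print(states.shape, steps < 100)  # (4, 5) True
```

After a few iterations each node's state reflects information from nodes several hops away, which is the diffusion described above.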

Proof of Concept: Subgraph Matching

Accuracy comparison on synthetic data showing GNNs significantly outperform Feedforward Neural Networks (FNN), especially as graph complexity increases.

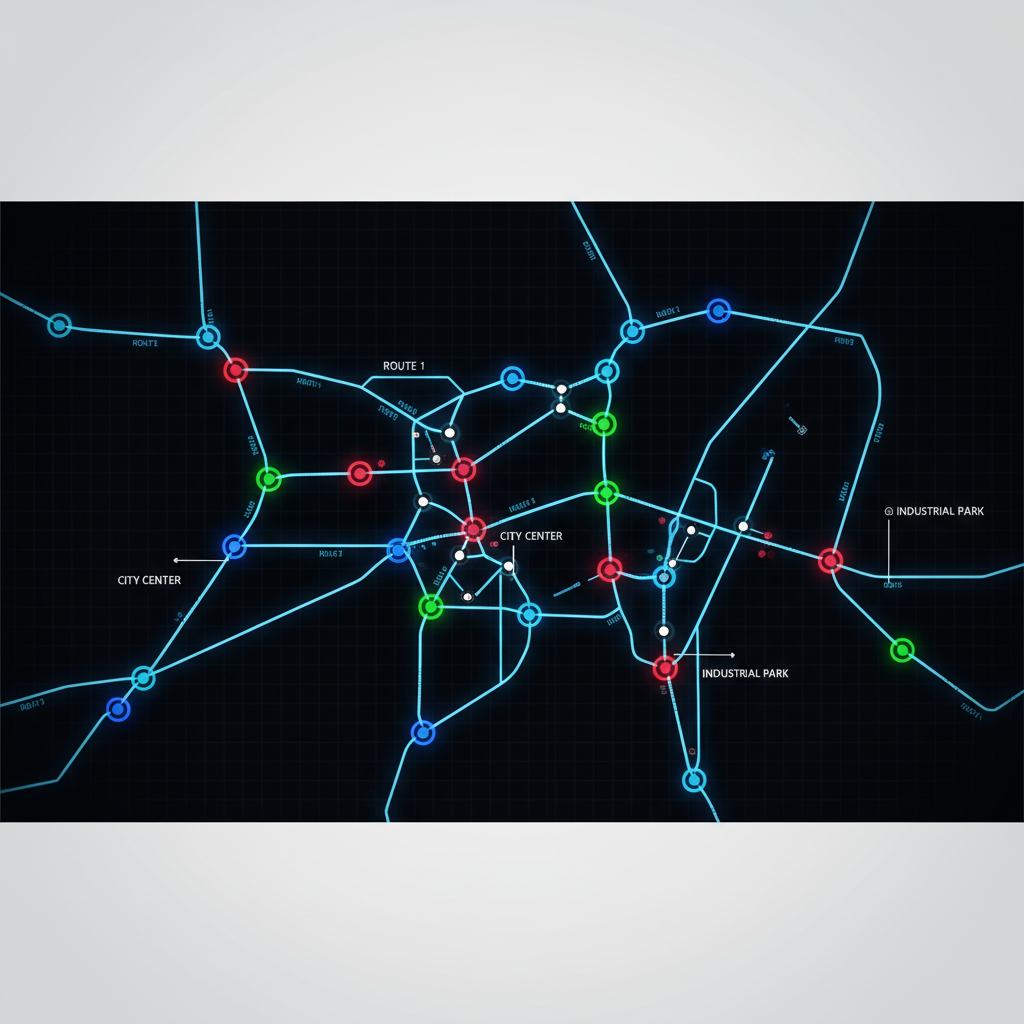

The Traffic Forecasting Challenge

Traffic forecasting is a quintessential <b>Spatial-Temporal</b> problem.<br><br><ul><li><b>Spatial:</b> Roads are not isolated; congestion propagates to connected neighbors (topology).</li><li><b>Temporal:</b> Traffic flow is dynamic and history-dependent (time-series).</li></ul><br>Previous models (SVM, LSTM) captured one aspect well, but not both simultaneously.
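To make the two axes concrete, a forecasting dataset is typically stored as a matrix with one row per time step and one column per road sensor, then cut into (history, target) pairs. The sketch below assumes that layout; the function name `make_windows`, the window lengths, and the synthetic readings are illustrative only.

```python
import numpy as np

def make_windows(series, n_hist, n_pred):
    """Cut a (T, N) series into supervised samples.

    Returns X with shape (S, n_hist, N) and Y with shape (S, n_pred, N),
    where S = T - n_hist - n_pred + 1 and N is the number of graph nodes.
    """
    total = n_hist + n_pred
    n_samples = series.shape[0] - total + 1
    x = np.stack([series[i:i + n_hist] for i in range(n_samples)])
    y = np.stack([series[i + n_hist:i + total] for i in range(n_samples)])
    return x, y

# Synthetic stand-in for sensor readings: 20 time steps, 3 sensors.
series = np.arange(20 * 3, dtype=float).reshape(20, 3)

# 12 past steps as input, the next 3 steps as the forecasting target.
x, y = make_windows(series, n_hist=12, n_pred=3)
print(x.shape, y.shape)  # (6, 12, 3) (6, 3, 3)
```

The time axis of `x` carries the temporal signal; the sensor axis is where the road graph's adjacency matrix applies.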

Solution: STGNN Architecture

The "Sandwich" Structure

The Spatio-Temporal Graph Convolutional Network (usually abbreviated STGCN; called STGNN in this deck) avoids recurrent networks (RNNs) entirely. Instead, it stacks blocks, each built from three layers:

1. Temporal Gated Conv (Time)

2. Spatial Graph Conv (Space)

3. Temporal Gated Conv (Time)

<b>Benefit:</b> Fully parallelizable training and faster convergence compared to RNNs.
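A minimal NumPy sketch of one such block, under stated assumptions: the temporal layers are unpadded 1-D convolutions along time with a GLU gate (`P * sigmoid(Q)`), the spatial layer uses a normalized adjacency matrix rather than any particular polynomial approximation, and residual connections and normalization are omitted. Function names, kernel sizes, and channel counts are illustrative.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def temporal_gated_conv(x, kernel):
    """x: (T, N, C_in); kernel: (Kt, C_in, 2*C_out) -> (T-Kt+1, N, C_out)."""
    kt = kernel.shape[0]
    t_out = x.shape[0] - kt + 1
    # Each output step depends only on the input, never on another output,
    # so all time steps are computed in one vectorized operation.
    windows = np.stack([x[k:k + t_out] for k in range(kt)])   # (Kt, t_out, N, C_in)
    conv = np.einsum("ktnc,kcd->tnd", windows, kernel)
    p, q = np.split(conv, 2, axis=-1)
    return p * sigmoid(q)                                     # GLU gate

def spatial_graph_conv(x, a_norm, weights):
    """x: (T, N, C_in); a_norm: (N, N); weights: (C_in, C_out)."""
    mixed = np.einsum("nm,tmc->tnc", a_norm, x)   # mix neighbors at every time step
    return np.maximum(mixed @ weights, 0.0)       # ReLU

def st_conv_block(x, a_norm, k1, w, k2):
    h = temporal_gated_conv(x, k1)       # 1. time
    h = spatial_graph_conv(h, a_norm, w) # 2. space
    return temporal_gated_conv(h, k2)    # 3. time

rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0, 1],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [1, 0, 1, 0]], dtype=float)       # 4-node ring
a_hat = adj + np.eye(4)
d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
a_norm = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]

x = rng.normal(size=(12, 4, 1))                   # 12 steps, 4 nodes, 1 channel
k1 = rng.normal(size=(3, 1, 2 * 8)) * 0.1         # Kt=3, 1 -> 8 channels
w = rng.normal(size=(8, 4)) * 0.1                 # 8 -> 4 channels
k2 = rng.normal(size=(3, 4, 2 * 8)) * 0.1         # Kt=3, 4 -> 8 channels

out = st_conv_block(x, a_norm, k1, w, k2)
print(out.shape)  # (8, 4, 8)
```

Each unpadded temporal layer with kernel size 3 shortens the sequence by 2 steps, so 12 input steps become 8 after one block.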

Experimental Setup & Datasets

BJER4 (Beijing)

• Urban traffic network<br>• 12 major roads (dense)<br>• Data collected every 5 minutes<br>• High-complexity junctions

PeMSD7 (California)

• Highway network<br>• 228 sensing stations<br>• Drawn from the wider PeMS system of about 39,000 sensors (speed/flow)<br>• Tests scalability

Performance: STGNN vs Baselines

STGNN achieves the lowest error rates (MAE: 2.25), outperforming both statistical baselines (ARIMA) and complex recurrent methods (GCGRU).
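For reference, MAE (mean absolute error) is the average absolute gap between predicted and observed values, so lower is better. A small sketch of the computation follows; the speed values are made up for illustration and are not taken from the reported experiments.

```python
import numpy as np

def mae(y_true, y_pred):
    """Mean absolute error between observations and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(y_true - y_pred)))

# Hypothetical observed vs. predicted speeds at four sensors.
observed = [60.0, 55.0, 48.0, 62.0]
predicted = [58.0, 56.0, 50.0, 61.0]

score = mae(observed, predicted)
print(score)  # 1.5
```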

Training Efficiency

<b>Faster Convergence:</b> Because STGNN uses convolutions instead of recurrent loops, it can compute time-steps in parallel. It achieves lower loss in significantly less training time compared to GCGRU.

Conclusion & Future Directions

Summary

<ul><li>GNNs capture the topological information that traditional ML discards.</li><li>STGNNs address traffic forecasting by modeling space and time simultaneously.</li><li>The convolutional architecture allows parallel training and faster convergence than recurrent models.</li></ul>

Future Challenges

<ul><li>Handling <b>Dynamic Graphs</b> (where the road network itself changes).</li><li>Improving <b>Scalability</b> for massive city-wide networks.</li><li>Addressing <b>Heterogeneity</b> (different types of roads/nodes).</li></ul>
