The Evolution of Computers: From Mechanical to AI

Explore the history of computing from Charles Babbage's Analytical Engine to modern AI and Quantum Computing. Learn about vacuum tubes, transistors, and more.

The History of Computers

From Mechanical Gears to Artificial Intelligence

Index: Timeline of Evolution

Introduction: What is a Computer?

Early Mechanical Era (Babbage & Lovelace)

1st Gen: Vacuum Tubes (ENIAC)

2nd Gen: The Transistor Revolution

3rd Gen: Integrated Circuits

4th Gen: Microprocessors & The PC

The Internet Era & Connectivity

Modern Era: AI & Quantum Computing

Introduction

A computer is a machine that can be programmed to carry out sequences of arithmetic or logical operations automatically. The history of computers isn't just about silicon; it tracks humanity's quest to automate calculation, which began thousands of years ago with counting aids such as the abacus.

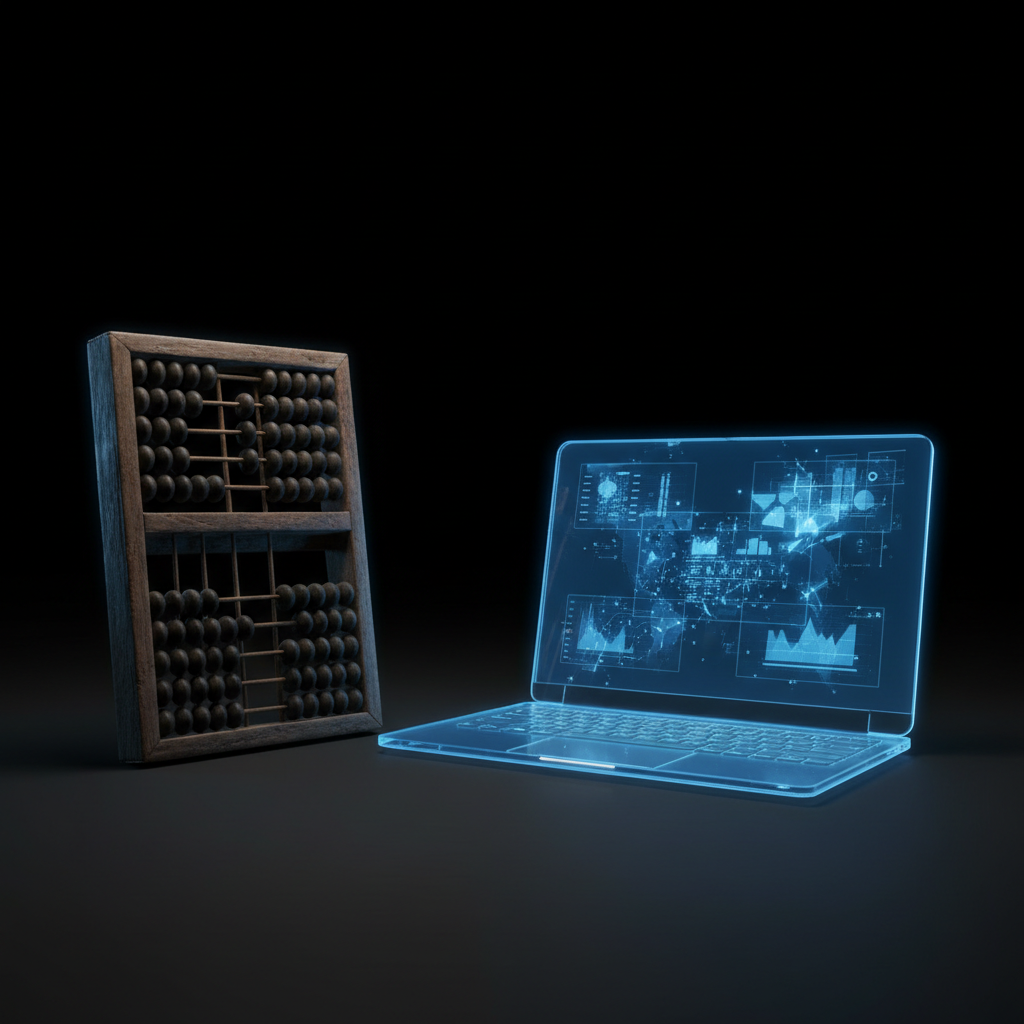

Early Mechanical Era

Before electronics, there were gears. Charles Babbage proposed the 'Analytical Engine' in 1837, widely considered the first design for a general-purpose computer, although it was never completed. Ada Lovelace is often credited as the first computer programmer for the algorithm she wrote for this machine.

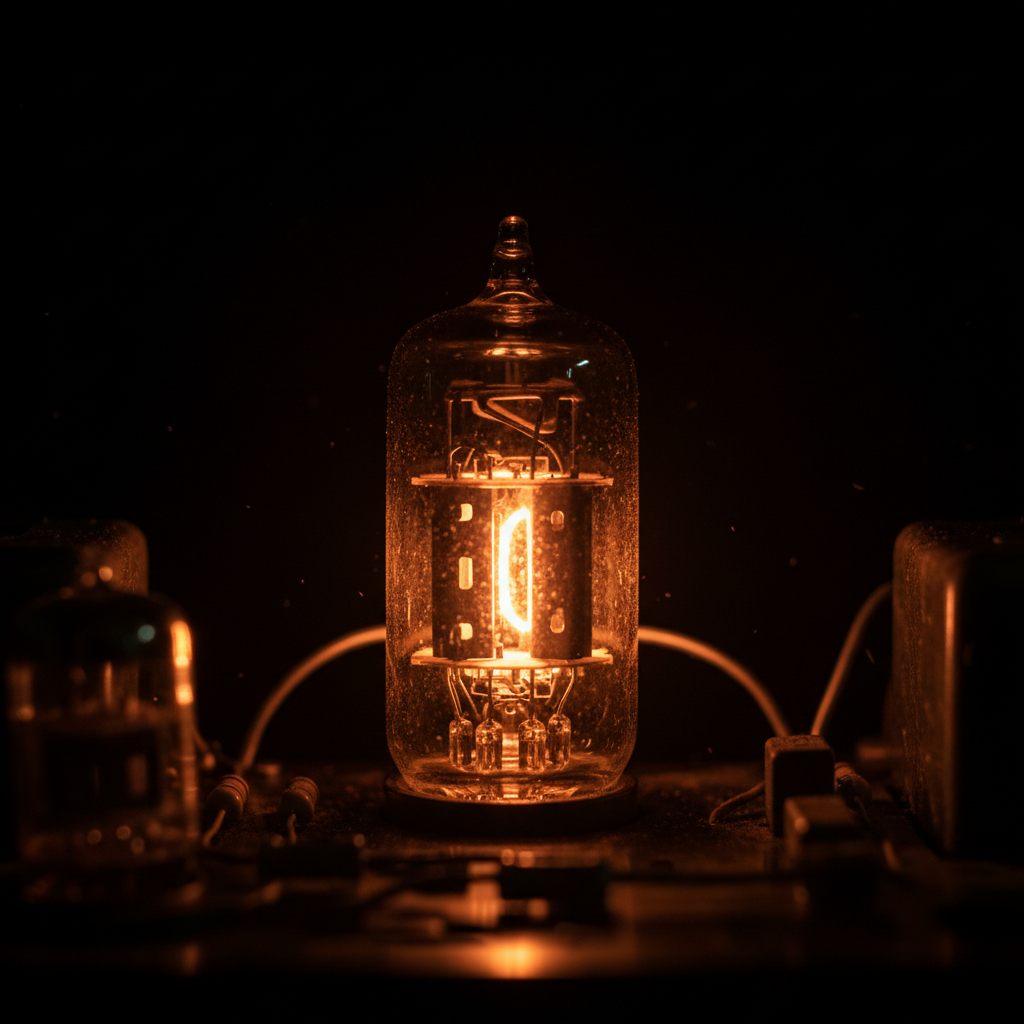

1st Generation: Vacuum Tubes (1940-1956)

The first electronic computers, such as ENIAC, used vacuum tubes for circuitry. They were massive, often filling entire rooms, expensive to operate, and generated tremendous heat. ENIAC used nearly 18,000 vacuum tubes and weighed about 30 tons.

2nd Generation: Transistors (1956-1963)

The transistor, invented in 1947, transformed computing once it replaced vacuum tubes in commercial machines. Computers became smaller, faster, cheaper, and more energy-efficient. This era saw the rise of assembly languages, early high-level languages such as FORTRAN and COBOL, and early operating systems.

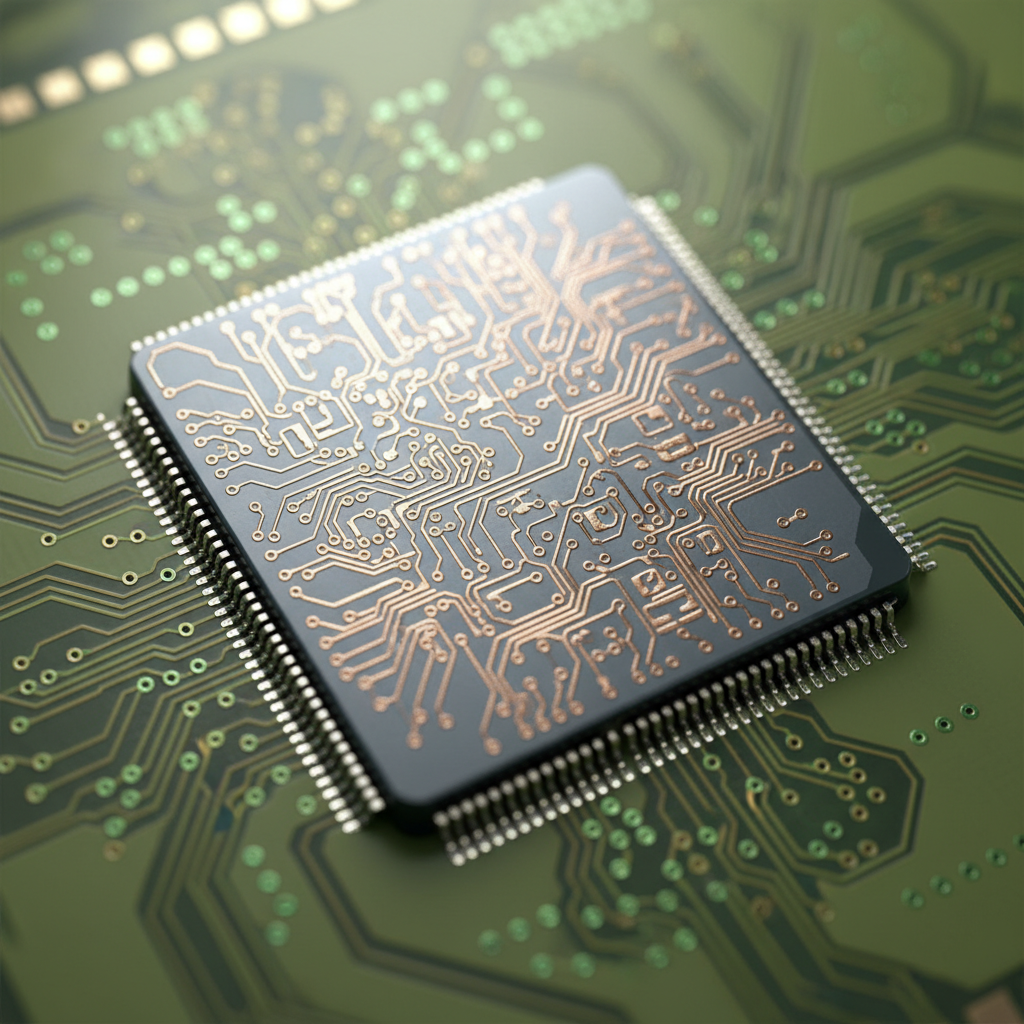

3rd Generation: Integrated Circuits (1964-1971)

The development of the Integrated Circuit (IC) placed many transistors onto a single silicon chip. Computers became much faster and smaller. Users also began to interact with them through keyboards and monitors instead of punch cards.

4th Generation: Microprocessors & PCs

Starting in 1971, thousands of transistors were integrated onto a single silicon chip that held an entire central processing unit: the microprocessor. This enabled the Personal Computer (PC) revolution. Machines like the Apple II and IBM PC brought computing into homes and schools.

The Internet & Connectivity

While hardware shrank, connectivity expanded. The arrival of the World Wide Web in the early 1990s turned computers into communication tools. Mobile computing (smartphones) in the 2000s put more power than early mainframes in our pockets.

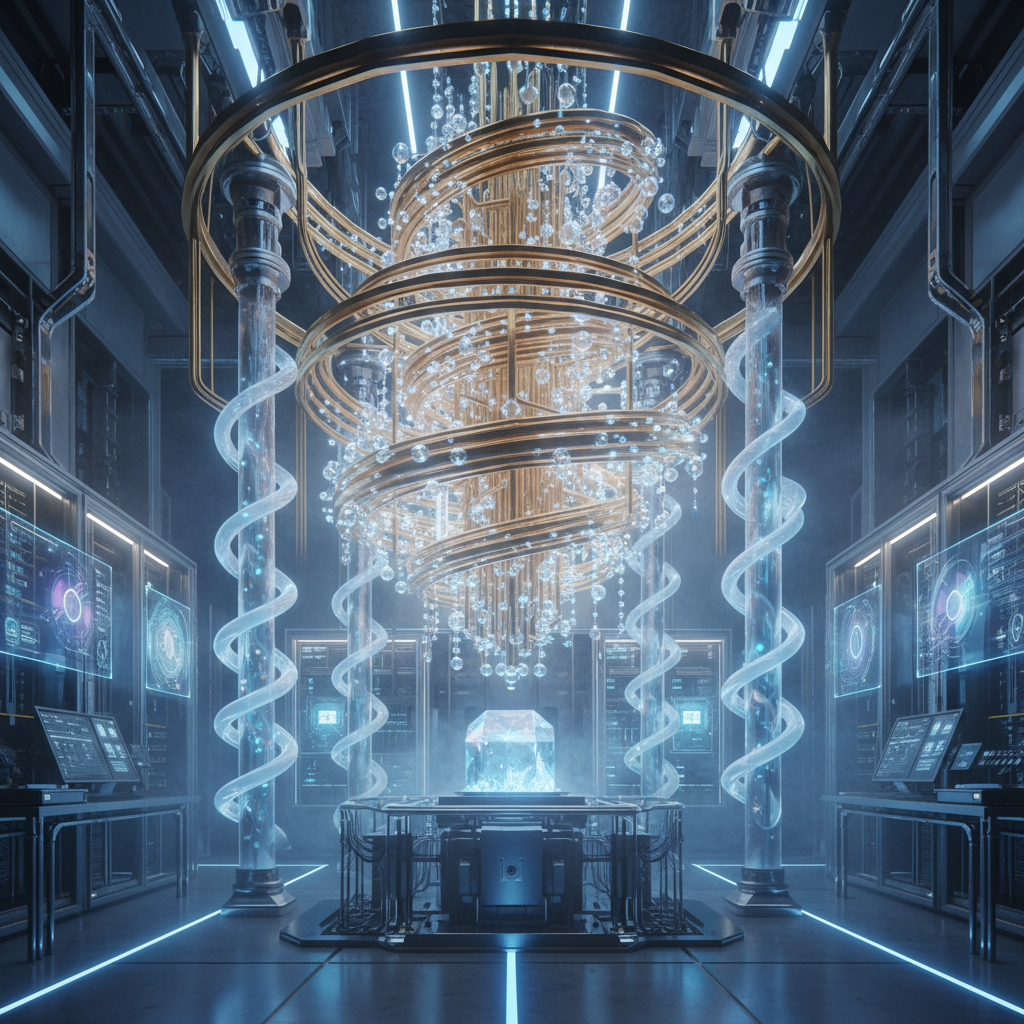

The Future: AI & Quantum

We are now entering the era of Artificial Intelligence and Quantum Computing. Classical computers store information as bits that are always either 0 or 1. Quantum computers use qubits, which can exist in a superposition of both states, with the aim of solving certain problems far beyond the reach of traditional supercomputers.
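To make the difference between a bit and a qubit a little more concrete, here is a minimal Python sketch. It is only an illustration under simplifying assumptions: the function name `measurement_probabilities` and the two-amplitude representation are made up for this example and do not come from any quantum library. It models one idealized qubit and computes the chance of reading 0 or 1 when that qubit is measured.

```python
import math
from typing import Tuple


def measurement_probabilities(alpha: complex, beta: complex) -> Tuple[float, float]:
    """Return (P(0), P(1)) for a single-qubit state alpha|0> + beta|1>.

    Hypothetical helper for illustration: the probability of each outcome
    is the squared magnitude of its amplitude, after normalizing the state.
    """
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if norm == 0:
        raise ValueError("state must have at least one non-zero amplitude")
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm


# A classical bit set to 0 behaves like a state that always reads 0.
classical_zero = measurement_probabilities(1, 0)

# An equal superposition: reading 0 and reading 1 are equally likely.
amplitude = 1 / math.sqrt(2)
superposition = measurement_probabilities(amplitude, amplitude)

print("classical 0   ->", classical_zero)
print("superposition ->", superposition)
```

A real quantum computer works with many entangled qubits at once, which this single-qubit sketch does not attempt to model.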
