Visualizing AI Hallucinations: Semantic Entropy as Art

Explore Semantic Stutter: an artistic project visualizing LLM uncertainty and hallucinations through semantic entropy analysis and aesthetic blur effects.

Visualizing the hidden anxiety of Large Language Models through Semantic Entropy.

THE ILLUSION OF CONFIDENCE

Modern AI interfaces present a polished, authoritative facade. Even when an LLM hallucinates, it speaks with the same certainty as when it states a fact. 'Semantic Stutter' challenges this opacity by asking: What if we could see the machine hesitating?

TECHNICAL FOUNDATION: SEMANTIC ENTROPY

Based on research by Kuhn et al. (2023).

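Semantic entropy measures uncertainty by sampling multiple answers to the same prompt and grouping them by meaning.

If the answers cluster into many different meanings -> High Entropy (Confusion). If they share a single meaning -> Low Entropy.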
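As a rough illustration of the idea, the sketch below computes a discrete entropy from cluster labels alone. It assumes each sampled answer has already been assigned a meaning-cluster id and uses the share of samples per cluster as the probability. This is a simplification for illustration; the function name `semantic_entropy` is hypothetical and not taken from the project's code.

```python
import math
from collections import Counter

def semantic_entropy(cluster_ids):
    """Shannon entropy (in nats) over the share of samples in each meaning cluster.

    Assumption: cluster_ids holds one cluster label per sampled answer.
    """
    if not cluster_ids:
        raise ValueError("need at least one sampled answer")
    counts = Counter(cluster_ids)
    total = len(cluster_ids)
    # sum of p * log(1/p), with p = fraction of samples in the cluster
    return sum((n / total) * math.log(total / n) for n in counts.values())

# Five samples that all share one meaning: no uncertainty.
consistent = semantic_entropy([0, 0, 0, 0, 0])
# Five samples with five different meanings: the maximum, log(5).
fragmented = semantic_entropy([0, 1, 2, 3, 4])
```

With N samples the score ranges from 0 (one cluster) to log(N) (every answer in its own cluster).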

The Hallucination Detection Mechanism

We prompted the model 10 times per question. Questions with factual answers (Low Entropy) yield consistent clusters. Hallucinations (High Entropy) yield fragmented clusters.

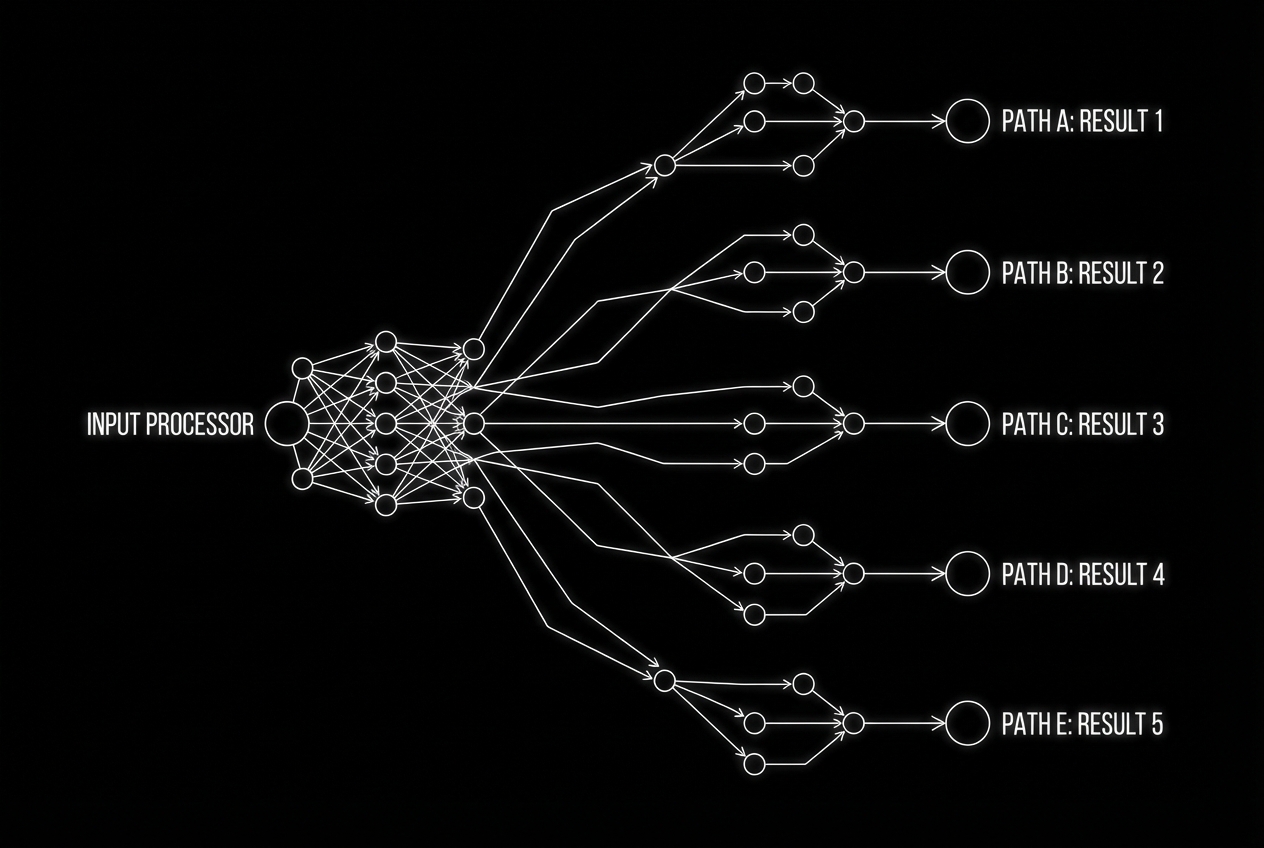
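The grouping step can be sketched as a greedy pass that compares each new answer against one representative per existing cluster. Kuhn et al. decide equivalence with a bidirectional entailment check; how this project decides it is not stated here, so the sketch takes the check as a pluggable `same_meaning` function. The string-matching stand-in `naive_same_meaning` is purely a placeholder assumption, far weaker than an entailment model.

```python
def cluster_by_meaning(answers, same_meaning):
    """Greedily group answers; returns one cluster id per answer."""
    representatives = []  # first answer seen for each cluster
    cluster_ids = []
    for answer in answers:
        for cid, rep in enumerate(representatives):
            if same_meaning(answer, rep):
                cluster_ids.append(cid)
                break
        else:
            # No existing cluster matched: open a new one.
            representatives.append(answer)
            cluster_ids.append(len(representatives) - 1)
    return cluster_ids

def naive_same_meaning(a, b):
    # Placeholder only: case- and punctuation-insensitive string match.
    def normalize(s):
        return "".join(ch for ch in s.lower() if ch.isalnum())
    return normalize(a) == normalize(b)

samples = ["100 degrees Celsius", "100 degrees celsius.", "90 degrees Celsius"]
ids = cluster_by_meaning(samples, naive_same_meaning)
```

Here the first two samples land in the same cluster and the third opens a new one. A real equivalence check would also need to merge paraphrases that share no surface wording.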

SYSTEM ARCHITECTURE

INPUT: User asks a question via microphone.

PROCESSING: Llama 3 generates 5 parallel responses.

ANALYSIS: System clusters responses by meaning.

OUTPUT: Entropy Score -> Blur Intensity.

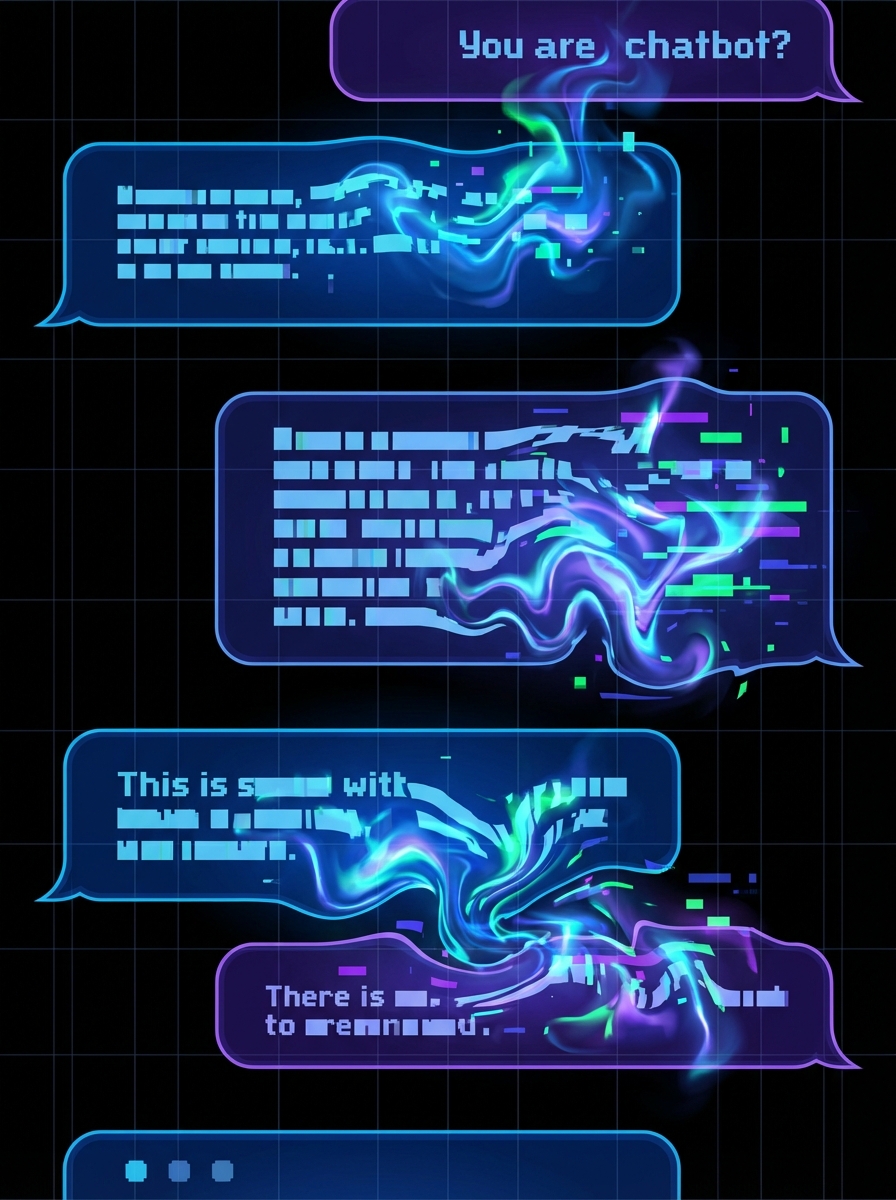
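One plausible way to realize the final step (Entropy Score -> Blur Intensity) is to normalize the score by its maximum possible value and scale it to a blur radius. The sketch below assumes a linear mapping and a hypothetical `max_blur_px` ceiling; the project's actual mapping curve and units are not documented here.

```python
import math

def blur_intensity(entropy, n_samples, max_blur_px=12.0):
    """Map an entropy score (in nats) to a blur radius in pixels.

    Assumptions: linear mapping; max_blur_px is an illustrative ceiling.
    """
    if n_samples < 2:
        return 0.0  # a single sample carries no measurable disagreement
    max_entropy = math.log(n_samples)  # every sample in its own cluster
    normalized = min(max(entropy / max_entropy, 0.0), 1.0)  # clamp to [0, 1]
    return normalized * max_blur_px

sharp = blur_intensity(0.0, n_samples=5)            # fully consistent answers
blurred = blur_intensity(math.log(5), n_samples=5)  # fully fragmented answers
```

Normalizing by log(N) keeps the visual range the same regardless of how many responses are sampled per question.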

VISUALIZING UNCERTAINTY

The clearer the answer, the more stable the text. The more the AI hallucinates, the more the text vibrates, overlaps, and blurs. This is 'Semantic Stutter'.

CASE A: LOW ENTROPY

Question: 'What is the boiling point of water?' Status: Factual. The output text remains sharp, static, and legible.

CASE B: HIGH ENTROPY

Question: 'Describe the secret meeting between Napoleon and aliens.' Status: Hallucination. The output is illegible, trembling, and layered with ghosting artifacts.

ARTISTIC STATEMENT

We often treat AI errors as 'bugs'. In this project, the error is the material. By forcing the machine to visually display its internal 'anxiety', we break the illusion of objective truth. The resulting unclear picture is not a failure of display; it is an accurate rendering of the lack of truth.

CONCLUSION

Semantic Stutter shows that ambiguity can be measured and visualized. It transforms the hidden statistical probability of 'truth' into a visceral visual experience.

- ai-hallucinations

- semantic-entropy

- data-visualization

- ai-art

- large-language-models

- machine-learning

- generative-ai