OpenMP and SYCL: A Guide to Heterogeneous Computing

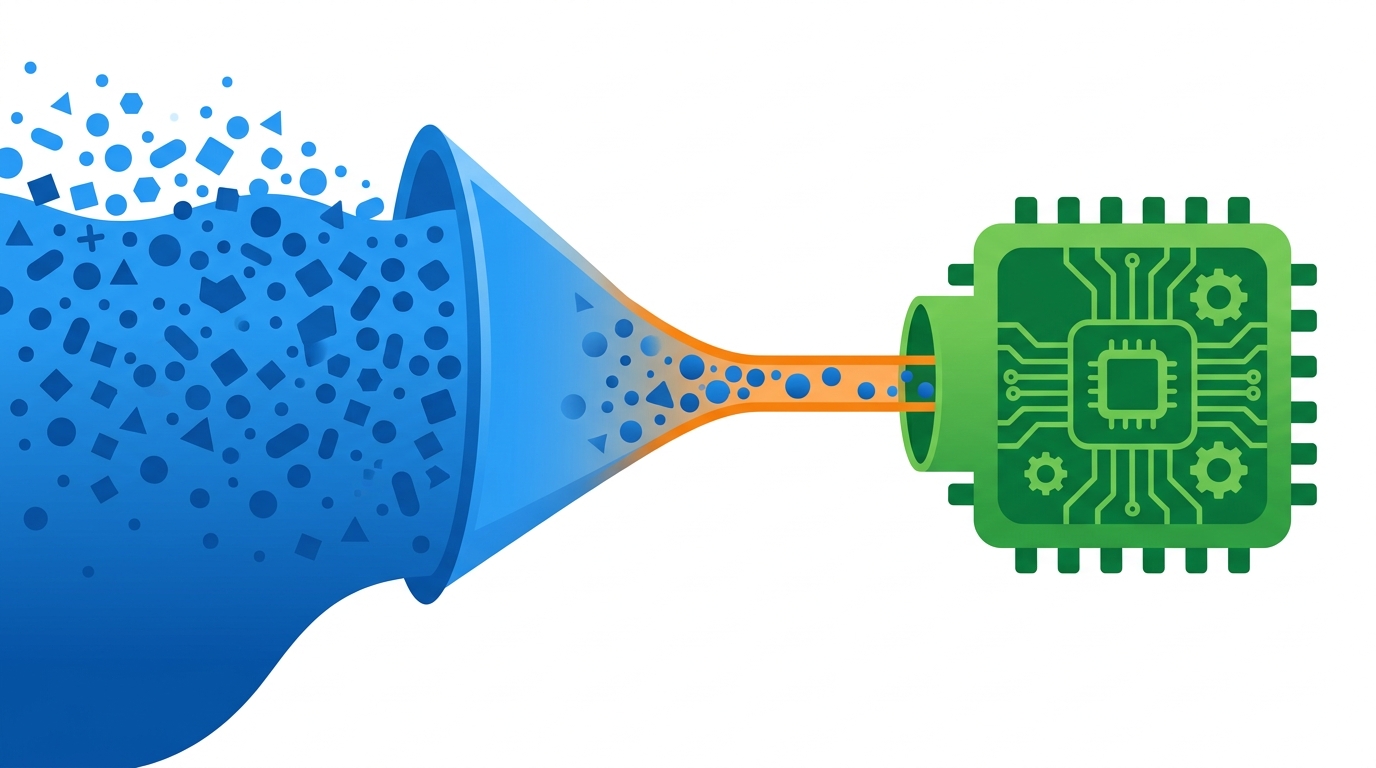

Explore the evolution of OpenMP and SYCL for GPU offloading, parallel computing models, and a decision matrix for selecting the right programming standard.

- openmp

- sycl

- parallel-computing

- gpu-offloading

- hpc

- c++

- heterogeneous-computing

- cuda